December 28, 2021

The material on this website is freely available for educational purposes.

Requests for re-use of digital images: contact the UC Press.

Proper citation of the material found in these pages is:

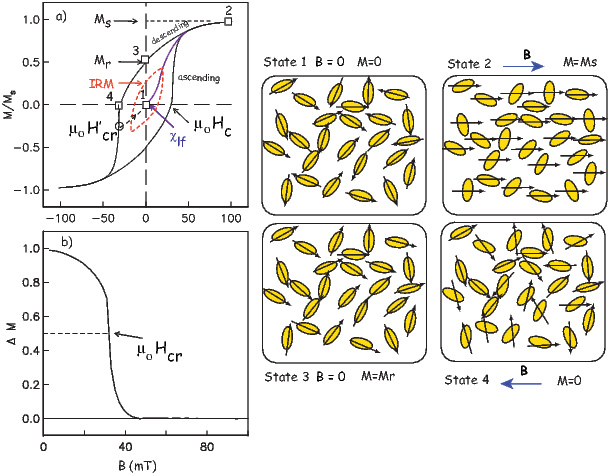

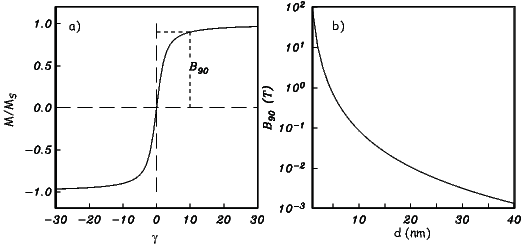

Tauxe, L., Banerjee, S.K., Butler, R.F. and van der Voo, R., Essentials of Paleomagnetism, 5th Web Edition, 2018.

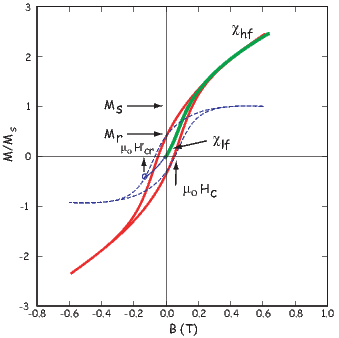

The printed version of this book appeared in January 2010. Order a printed version (cheaper than printing it yourself!).

This book is intended to work with the companion software package described in PmagPy Cookbook.

This material is based upon work supported by the National Science Foundation.

1 Purpose of the book

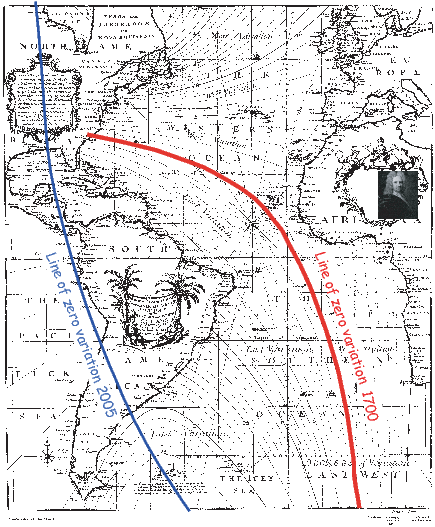

The geomagnetic field acts both as an umbrella, shielding us from cosmic radiation and as a window, offering one of the few glimpses of the inner workings of the Earth. Ancient records of the geomagnetic field can inform us about geodynamics of the early Earth and changes in boundary conditions through time. Thanks to its essentially dipolar nature, the geomagnetic field has acted as a guide, pointing to the axis of rotation thereby providing latitudinal information for both explorers and geologists.

Human measurements of the geomagnetic field date back about a millennium and are quite sparse prior to about 400 years ago. Knowledge of what the field has done in the past relies on accidental records carried by geological and archaeological materials. Teasing out meaningful information from such materials requires an understanding of the fields of rock magnetism and paleomagnetism, the subjects of this book. Rock and paleomagnetic data are useful in many applications in Earth Science in addition to the study of the ancient geomagnetic field. This book attempts to draw together essential rock magnetic theory and useful paleomagnetic techniques in a consistent and up-to-date manner. It was written for several categories of readers:

There are a number of excellent references on paleomagnetism and on the related specialties (rock magnetism and geomagnetism). The ever-popular but now out-of-print text by Butler (1992) has largely been incorporated into the present text. For in-depth coverage of rock magnetism, we recommend Dunlop and Özdemir (1997). Similarly for geomagnetism, please see Backus et al. (1996). A rigorous analysis of the statistics of spherical data is given by Fisher et al. (1987). The details of paleomagnetic poles are covered in van der Voo (1993) and magnetostratigraphy is covered in depth by Opdyke and Channell (1996). The Treatise on Geophysics, vol. 5 (edited by Kono, 2007) and The Encyclopedia of Geomagnetism and Paleomagnetism (edited by Gubbins and Herrero-Bervera, 2007) have up-to-date reviews of many topics covered in this book. The present book is intended to augment or distill information from the broad field of paleomagnetism, complementing the existing body of literature.

An important aim of the problems in this book is to teach students to write simple computer programs themselves and to use programs that are supplied as a companion set of software (PmagPy). The programming language chosen for this is Python because it is free, cross-platform, open source and well supported. There are excellent online tutorials for Python and many open source modules, which make software development cheaper and easier than in most other programming environments. The appendix provides a brief introduction to programming and using Python. The reader is well advised to peruse the PmagPy Cookbook for further help in gaining the necessary computer skills. Also, students should have access to a relatively new computer (Windows and Mac OS 10.4 or higher are supported, but other computers may also work). Software installation is described at: magician.ucsd.edu/Software/PmagPy.

2 What is in the book

This book is a collaborative effort with contributions from R.F. Butler (Chapters 1, 3, 4, 6, 7, 9, 11 and the Appendix), S.K. Banerjee (Chapter 8) and R. van der Voo (Chapter 16). The MagIC database team designed and deployed the MagIC database, of which we have made liberal use in providing data for problem sets and in writing the PmagPy Cookbook, so there were significant contributions to this book project from C.G. Constable, A.A.P. Koppers and Rupert Minnett.

At the beginning of most chapters, there are recommended readings which will help fill in background knowledge. There are also suggested readings at the end of most chapters that will allow students to pursue the subject matter in more depth.

The chapters themselves contain the essential theory required to understand paleomagnetic research as well as illustrative applications. Each chapter is followed by a set of practical problems that challenge the student’s understanding of the material. Many problems use real data and encourage students to analyze the data themselves. [Solutions to the problems may be obtained from LT by instructors of classes using this book as a text.] The appendix contains detailed derivations, assorted techniques, useful tables and a comprehensive explanation of the PmagPy set of programs.

Chapter 1 begins with a review of the physics of magnetic fields. Maxwell’s equations are introduced where appropriate and the magnetic units are derived from first principles. The conversion of units between cgs and SI conventions is also discussed and summarized in a handy table.

Chapter 2 reviews essential aspects of the Earth’s magnetic field, discussing the geomagnetic potential, geomagnetic elements, and the geomagnetic reference fields. The various magnetic poles of the Earth are also introduced.

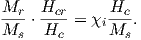

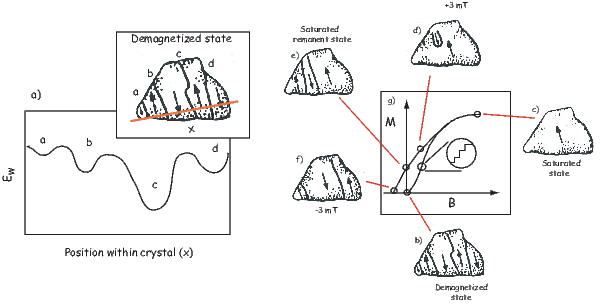

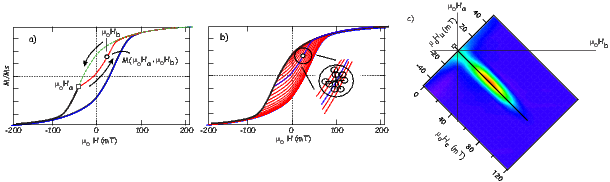

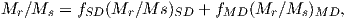

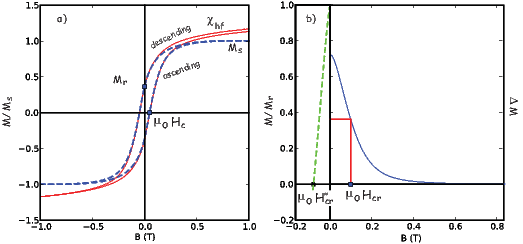

Chapters 3-8 deal with rock and mineral magnetism. The most important aspect of rock magnetism to the working paleomagnetist is how rocks can become magnetized and how they can stay that way. In order to understand this, Chapter 3 presents a discussion of the origin of magnetism in crystals, including induced and remanent magnetism. Chapter 4 continues with an explanation of anisotropy energy, magnetic domains and superparamagnetism. Magnetic hysteresis is covered in Chapter 5. Chapter 6 deals with specific magnetic minerals and their properties, leading up to the origin of magnetic remanence in rocks, the topic of Chapter 7. Finally, Chapter 8 deals with applied rock magnetism and environmental magnetism.

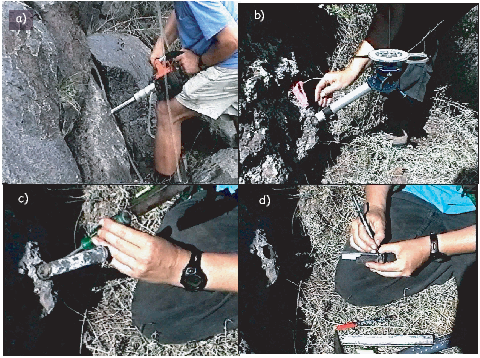

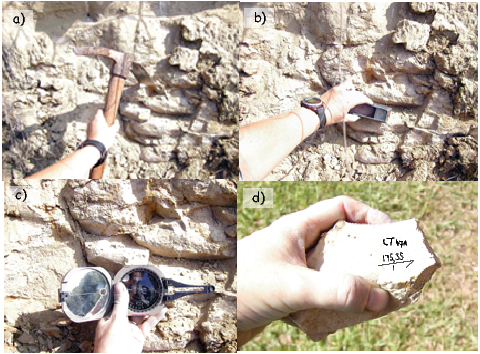

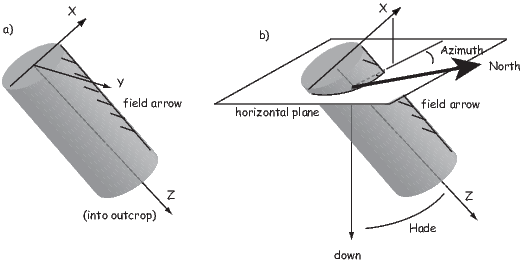

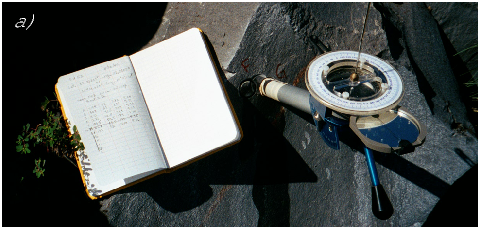

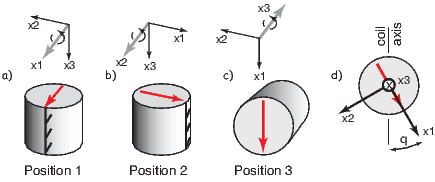

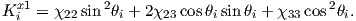

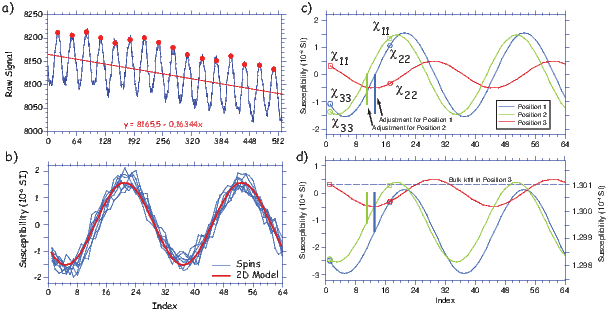

Chapters 9-13 delve into the nuts and bolts of paleomagnetic data acquisition and analysis. Chapter 9 suggests ways of sampling rocks in the field and methods for treating them in the laboratory to obtain a paleomagnetic direction. Various techniques for obtaining paleointensities are described in Chapter 10. Once the data are in hand, Chapters 11 and 12 deal with statistical methods for analyzing magnetic vectors. Paleomagnetic tensors are introduced in Chapter 13, which explains measurement and treatment of anisotropy data.

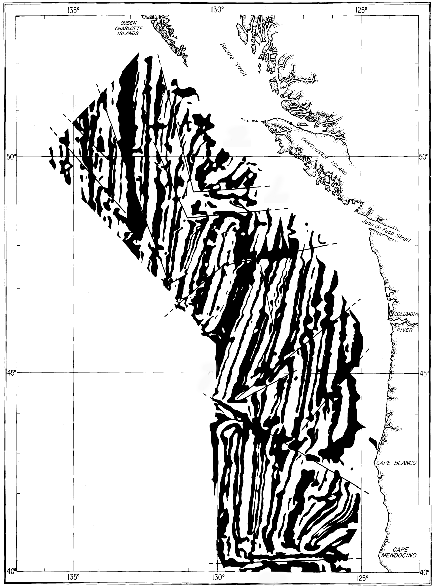

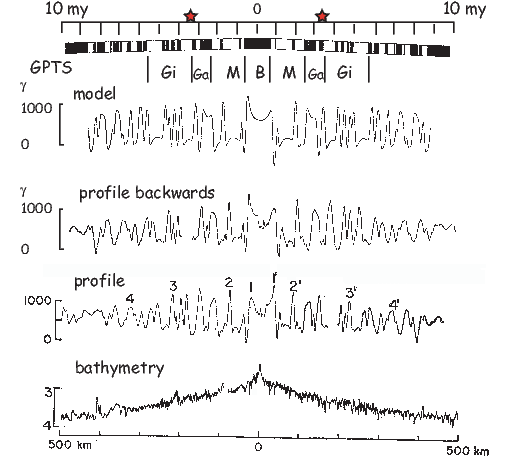

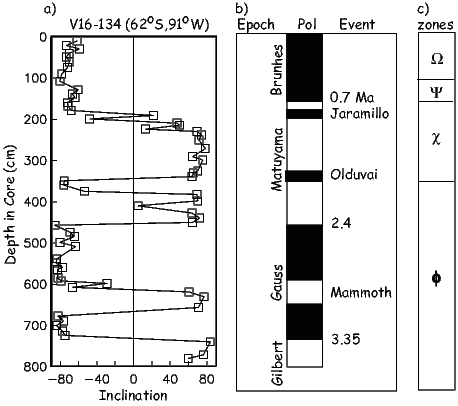

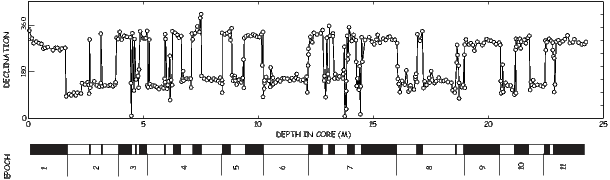

Chapters 14-16 illustrate diverse applications of paleomagnetic data. Chapter 14 shows how they are used to study the geomagnetic field. Chapter 15 describes the development of the geomagnetic polarity time scale and various applications of magnetostratigraphy. Chapter 16 focuses on apparent polar wander and tectonic applications.

The appendix contains more detailed information, included for supplemental background or useful techniques. It is divided into several sections: Appendix A summarizes various definitions and detailed derivations, including various mathematical tricks such as vector and tensor operations. Appendix B.1 describes some plots commonly employed by paleomagnetists. Appendix C.2 collects methods and tables useful in directional statistics. Appendix D describes techniques specific to the measurement and analysis of anisotropy data. The PmagPy Cookbook provides an introduction to the Magnetics Information Consortium (MagIC) database, the current repository for rock and paleomagnetic data, and summarizes essential computer skills, including basic Unix commands, an introduction to Python programming and extensive examples of programs in the PmagPy software package used in the problems at the end of each chapter.

3 How to use the book

Each chapter builds on the principles outlined in the previous chapters, so the reader is encouraged to work through the book sequentially. There are recommended readings before and after every chapter, selected to provide background information and supplemental reading for the motivated reader, respectively. These are meant to be optional.

The reader is encouraged to study Chapter 6 in the PmagPy Cookbook before beginning to work on the problems at the end of each chapter. The utility of the book will be greatly enhanced by successfully installing and using the programs referred to in the problems. By conscientiously trying them out as they are mentioned, the reader will gain familiarity not only with the PmagPy software package, but also with the concepts discussed in the chapters.

We have attempted to maintain a consistent notation throughout the book. Vectors and tensors are in bold face; other parameters, including vector components, are in italics. The most important physical and paleomagnetic parameters, acronyms and statistics are listed in Appendix A.

The problems in this book are intended to be solved with Jupyter Notebooks in conjunction with the PmagPy software of Tauxe et al. (2016). To get ready for this, you must do the following steps:

The problems at the end of each chapter assume proficiency in both Python programming and the use of Jupyter notebooks. See Python Programming for Earth Scientists for a complete course.

For examples on how to use PmagPy in a Jupyter notebook, see PmagPy.ipynb in your data_files directory.

4 Acknowledgements

LT is the primary author of this book and bears sole responsibility for all mistakes. There are significant contributions by RFB, SKB and RvdV. We are indebted to many people for assistance great and small. This book began life as a set of lecture notes based loosely on the earlier book by Tauxe (1998). Many pairs of eyes hunted down errors in the text and the programs each time the course was given. The course was also occasionally co-taught with Cathy Constable and Jeff Gee, who contributed significantly to the development of the manuscript and the proofreading thereof. Thanks go to the many “live” and “online” students who patiently worked through various drafts. Special thanks go to Kenneth Yuan, Liu Cy, Maxwell Brown and Michael Wack, who provided many detailed comments and helpful suggestions. Reviews by Ken Kodama, Brad Clement, Scott Bogue and Cor Langereis improved the book substantially. Also, careful proofreading by Newlon Tauxe of the first few chapters is greatly appreciated. And of course, I am deeply grateful to my mentors, Dennis V. Kent and Neil D. Opdyke, who taught me how to do science.

I owe a debt of gratitude to the many sources of public domain software that ended up in the package PmagPy, including contributions by Cathy Constable, Monika Korte, Jeff Gee, Peter Selkin, Ron Shaar, Nick Swanson-Hysell, Ritayan Mitra and especially Lori Jonestrask, as well as the many dedicated contributors to the Numpy, Matplotlib, Basemap, Cartopy, and Pandas Python modules used extensively by PmagPy. Also, many illustrations were prepared with the excellent programs Magmap, Contour and Plotxy by Robert L. Parker, to whom I remain deeply grateful. I gratefully acknowledge the authors of many earlier books, too many to name but included in the Bibliography, which both educated and inspired me.

Finally, I am grateful to my husband, Hubert Staudigel, and my children, Philip and Daniel Staudigel who have long tolerated my obsession with paleomagnetism with grace and good humor and frequently good advice.

Paleomagnetism is the study of the magnetic properties of rocks. It is one of the most broadly applicable disciplines in geophysics, having uses in diverse fields such as geomagnetism, tectonics, paleoceanography, volcanology, paleontology, and sedimentology. Although the potential applications are varied, the fundamental techniques are remarkably uniform. Thus, a grounding in the basic tools of paleomagnetic data analysis can open doors to many of these applications. One of the underpinnings of paleomagnetic endeavors is the relationship between the magnetic properties of rocks and the Earth’s magnetic field.

In this chapter we will review the basic physical principles behind magnetism: what are magnetic fields, how are they produced and how are they measured? Although many find a discussion of scientific units boring, much confusion arose when paleomagnetists switched from “cgs” to the Système International (SI) units and mistakes abound in the literature. Therefore, we will explain both unit systems and look at how to convert successfully between them. There is a review of essential mathematical tricks in Appendix A to which the reader is referred for help.

Magnetic fields, like gravitational fields, cannot be seen or touched. We can feel the pull of the Earth’s gravitational field on ourselves and the objects around us, but we do not experience magnetic fields in such a direct way. We know of the existence of magnetic fields by their effect on objects such as magnetized pieces of metal, naturally magnetic rocks such as lodestone, or temporary magnets such as copper coils that carry an electrical current. If we place a magnetized needle on a cork in a bucket of water, it will slowly align itself with the local magnetic field. Turning on the current in a copper wire can make a nearby compass needle jump. Observations like these led to the development of the concept of magnetic fields.

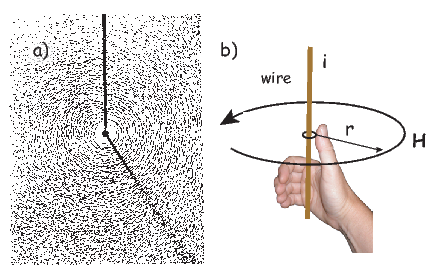

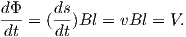

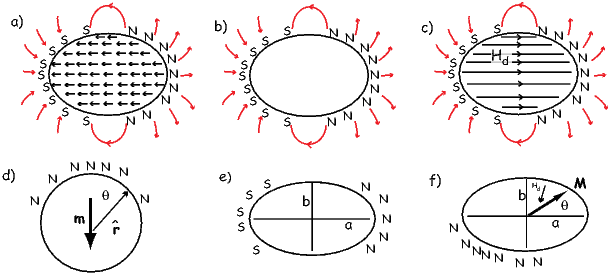

Electric currents make magnetic fields, so we can define what is meant by a “magnetic field” in terms of the electric current that generates it. Figure 1.1a is a picture of what happens when we pierce a flat sheet with a wire carrying a current i. When iron filings are sprinkled on the sheet, the filings line up with the magnetic field produced by the current in the wire. A loop tangential to the field is shown in Figure 1.1b, which illustrates the right-hand rule (see inset to Figure 1.1b). If your right thumb points in the direction of (positive) current flow (the direction opposite to the flow of the electrons), your fingers will curl in the direction of the magnetic field.

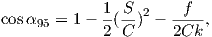

The magnetic field $\mathbf{H}$ points at right angles to both the direction of current flow and to the radial vector $\mathbf{r}$ in Figure 1.1b. The magnitude of $\mathbf{H}$ (denoted $H$) is proportional to the strength of the current $i$. In the simple case illustrated in Figure 1.1b the magnitude of $\mathbf{H}$ is given by Ampère’s law:

$$ H = \frac{i}{2\pi r}, $$

where $r$ is the length of the vector $\mathbf{r}$. So, now we know the units of $\mathbf{H}$: Am$^{-1}$.
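As a quick numerical illustration of how the field falls off with distance, the following sketch assumes the standard result for a long straight wire, $H = i/(2\pi r)$. The function name and the sample current and distances are illustrative choices, not values from the text.

```python
import math


def field_around_wire(current_amps, distance_m):
    """Magnitude of H (A/m) at a distance from a long straight wire.

    Assumes the straight-wire form of Ampere's law, H = i / (2 * pi * r).
    """
    if distance_m <= 0:
        raise ValueError("distance must be positive")
    return current_amps / (2.0 * math.pi * distance_m)


# Illustrative values only: a 1 A current sampled at 1 cm and 10 cm.
for r in (0.01, 0.1):
    print(f"r = {r} m: H = {field_around_wire(1.0, r):.3f} A/m")
```

Moving ten times farther from the wire reduces $H$ by a factor of ten, which is the $1/r$ dependence in the formula.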

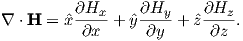

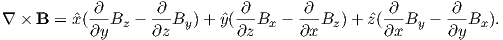

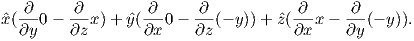

Ampère’s Law in its most general form is one of Maxwell’s equations of electromagnetism: in a steady electrical field, $\nabla \times \mathbf{H} = \mathbf{J}_f$, where $\mathbf{J}_f$ is the electric current density (see Section A.3.6 in the appendix for review of the $\nabla$ operator). In words, the curl (or circulation) of the magnetic field is equal to the current density. The origin of the term “curl” for the cross product of the gradient operator with a vector field is suggested in Figure 1.1a in which the iron filings seem to curl around the wire.

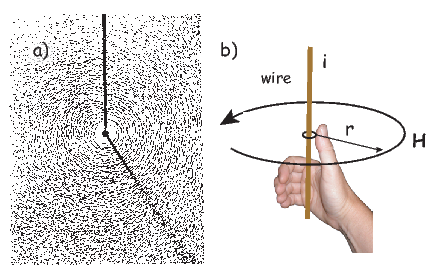

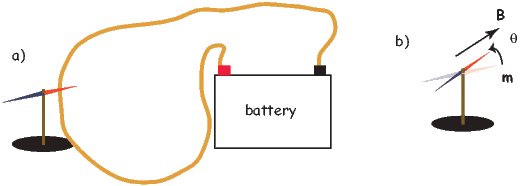

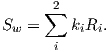

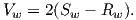

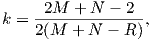

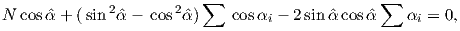

An electrical current in a wire produces a magnetic field that “curls” around the wire. If we bend the wire into a loop with an area $\pi r^2$ that carries a current $i$ (Figure 1.2a), the current loop would create the magnetic field shown by the pattern of the iron filings. This magnetic field is the same as the field that would be produced by a permanent magnet. We can quantify the strength of that hypothetical magnet in terms of a magnetic moment $\mathbf{m}$ (Figure 1.2b). The magnetic moment is created by a current $i$ and also depends on the area of the current loop (the bigger the loop, the bigger the moment). Therefore, the magnitude of the moment can be quantified by $m = i\pi r^2$. The moment created by a set of loops (as shown in Figure 1.2c) would be the sum of the $n$ individual loops, i.e.:

$$ m = n i \pi r^2. \tag{1.1} $$

So, now we know the units of $\mathbf{m}$: Am$^2$. In nature, magnetic moments are carried by magnetic minerals, the most common of which are magnetite and hematite (see Chapter 6 for details).
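The moment of a stack of identical current loops can be sketched in a few lines, assuming the relation $m = n i \pi r^2$ for $n$ loops of radius $r$ carrying current $i$. The function name and the coil dimensions are hypothetical and chosen only for illustration.

```python
import math


def loop_moment(current_amps, radius_m, n_loops=1):
    """Magnitude of the magnetic moment (A m^2) of n identical current loops."""
    return n_loops * current_amps * math.pi * radius_m ** 2


# Illustrative coil: 100 turns, 2 cm radius, 0.5 A.
m = loop_moment(current_amps=0.5, radius_m=0.02, n_loops=100)
print(f"m = {m:.4f} A m^2")
```

Doubling the radius quadruples the moment because the moment scales with loop area, while doubling the number of turns or the current only doubles it.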

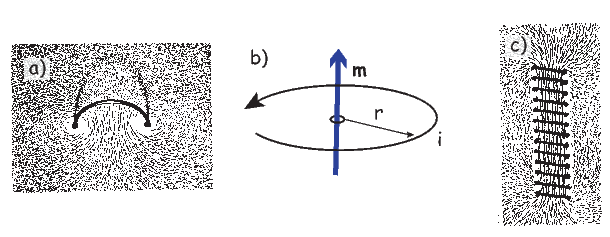

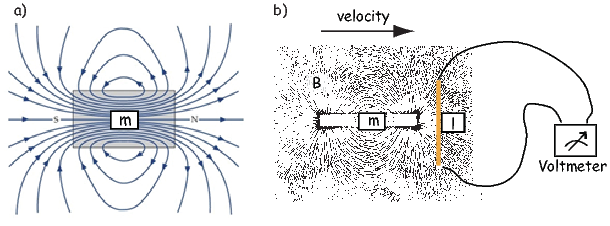

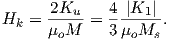

The magnetic field is a vector field because at any point it has both direction and magnitude. Consider the field of the bar magnet in Figure 1.3a. The direction of the field at any point is given by the arrows while the strength depends on how close the field lines are to one another. The magnetic field lines represent magnetic flux. The density of flux lines is one measure of the strength of the magnetic field: the magnetic induction B.

Just as the motion of electrically charged particles in a wire (a current) creates a magnetic field (Ampère’s Law), the motion of a magnetic field creates electric currents in nearby wires. The stronger the magnetic field, the stronger the current in the wire. We can therefore measure the strength of the magnetic induction (the density of magnetic flux lines) by moving a conductive wire through the magnetic field (Figure 1.3b).

Magnetic induction can be thought of as something that creates a potential difference with voltage $V$ in a conductor of length $l$ when the conductor moves relative to the magnetic induction $\mathbf{B}$ with velocity $v$ (see Figure 1.3b): $V = vlB$. From this we can derive the units of magnetic induction: the tesla (T). One tesla is the magnetic induction that generates a potential of one volt in a conductor of length one meter when moving at a rate of one meter per second. So now we know the units of $\mathbf{B}$: V$\cdot$s$\cdot$m$^{-2}$ = T.

Another way of looking at $\mathbf{B}$ is that if magnetic induction is the density of magnetic flux lines, it must be the flux $\Phi$ per unit area. So an increment of flux $d\Phi$ is the field magnitude $B$ times the increment of area $dA$. The area here is the length of the wire $l$ times its displacement $ds$ in time $dt$. The instantaneous velocity is $v = ds/dt$, so $d\Phi = B\,dA = B\,l\,ds$ and the rate of change of flux is:

$$ \frac{d\Phi}{dt} = \left(\frac{ds}{dt}\right) B l = vBl = V. \tag{1.2} $$

Equation 1.2 is known as Faraday’s law and in its most general form is the fourth of Maxwell’s equations. We see from Equation 1.2 that the units of magnetic flux must be a volt-second which is a unit in its own right: the weber (Wb). The weber is defined as the amount of magnetic flux which, when passed through a one-turn coil of a conductor carrying a current of one ampere, produces an electric potential of one volt. This definition suggests a means to measure the strength of magnetic induction and is the basis of the “fluxgate” magnetometer.
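The link between the moving-wire voltage $V = vlB$ and the rate of change of flux can be checked numerically. This is a minimal sketch; the function names, the 50 μT induction and the wire speed are illustrative assumptions.

```python
def induced_voltage(velocity_m_s, length_m, induction_t):
    """Potential difference (V) across a wire moving through B: V = v * l * B."""
    return velocity_m_s * length_m * induction_t


def flux_swept(induction_t, length_m, displacement_m):
    """Flux (Wb) through the area swept by the wire: dPhi = B * l * ds."""
    return induction_t * length_m * displacement_m


# A 1 m wire moving at 1 m/s through 1 T gives one volt, by definition of the tesla.
print(induced_voltage(1.0, 1.0, 1.0))

# Illustrative case: 1 m wire, 10 m/s, 50 microtesla.
v, l, b, dt = 10.0, 1.0, 50e-6, 0.01
voltage = induced_voltage(v, l, b)
flux_rate = flux_swept(b, l, v * dt) / dt  # dPhi/dt over a short time step
print(voltage, flux_rate)
```

Both routes give the same number because the swept area per unit time is simply $l\,v$.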

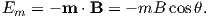

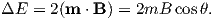

A magnetic moment $\mathbf{m}$ in the presence of a magnetic field $\mathbf{B}$ has a magnetostatic energy ($E_m$) associated with it. This energy tends to align compass needles with the magnetic field (see Figure 1.4). $E_m$ is given by $-\mathbf{m} \cdot \mathbf{B}$ or $-mB\cos\theta$, where $m$ and $B$ are the magnitudes of $\mathbf{m}$ and $\mathbf{B}$, respectively, and $\theta$ is the angle between them (see Section A.3.4 in the appendix for review of vector multiplication). Magnetic energy has units of joules and is at a minimum when $\mathbf{m}$ is aligned with $\mathbf{B}$.
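To see why the aligned state is the energy minimum, the expression $E_m = -mB\cos\theta$ can be tabulated over a range of angles. The sketch below takes $\theta$ to be the angle between the moment and the field; the moment and induction values are illustrative, not from the text.

```python
import math


def magnetostatic_energy(moment_am2, induction_t, theta_deg):
    """Magnetostatic energy (J) of a moment at angle theta to the field: -m B cos(theta)."""
    return -moment_am2 * induction_t * math.cos(math.radians(theta_deg))


# Illustrative values: a 1e-3 A m^2 needle in a 50 microtesla field.
m, b = 1e-3, 50e-6
energies = {theta: magnetostatic_energy(m, b, theta) for theta in (0, 45, 90, 135, 180)}
for theta, energy in energies.items():
    print(f"theta = {theta:3d} deg: E = {energy:+.2e} J")

lowest = min(energies, key=energies.get)
print("lowest energy at theta =", lowest, "degrees")
```

The energy rises monotonically from the aligned state (0°) to the antiparallel state (180°), which is why a freely pivoting needle swings toward the field direction.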

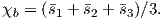

Magnetization is a magnetic moment (Am$^2$) normalized by volume or mass. We will use the symbol $\mathbf{M}$ for volume normalization (units of Am$^{-1}$) or $\Omega$ for mass normalization (units of Am$^2$kg$^{-1}$). Volume normalized magnetization therefore has the same units as $\mathbf{H}$, implying that there is a current somewhere, even in permanent magnets. In the classical view (pre-quantum mechanics), sub-atomic charges such as protons and electrons can be thought of as tracing out tiny circuits and behaving as tiny magnetic moments. They respond to external magnetic fields and give rise to an induced magnetization. The relationship between the magnetization induced in a material $\mathbf{M}_I$ and the external field $\mathbf{H}$ is defined as:

$$ \mathbf{M}_I = \chi_b \mathbf{H}. \tag{1.3} $$

The parameter $\chi_b$ is known as the bulk magnetic susceptibility of the material; it can be a complicated function of orientation, temperature, state of stress, time scale of observation and applied field, but is often treated as a scalar. Because $\mathbf{M}$ and $\mathbf{H}$ have the same units, $\chi_b$ is dimensionless. In practice, the magnetic response of a substance to an applied field can be normalized by volume (as in Equation 1.3) or by mass or not normalized at all. We will use the symbol $\kappa$ for mass normalized susceptibility and $K$ for the raw measurements (see Table 1.1) when necessary.

Certain materials can produce magnetic fields in the absence of external magnetic fields (i.e., they are permanent magnets). As we shall see in later chapters, these so-called “spontaneous” magnetic moments are also the result of spins of electrons which, in some crystals, act in a coordinated fashion, thereby producing a net magnetic field. The resulting spontaneous magnetization can be fixed by various mechanisms and can preserve records of ancient magnetic fields. This remanent magnetization forms the basis of the field of paleomagnetism and will be discussed at length in subsequent chapters.

B and H are closely related and in paleomagnetic practice, both B and H are referred to as the “magnetic field”. Strictly speaking, B is the induction and H is the field, but the distinction is often blurred. The relationship between B and H is given by:

$$ \mathbf{B} = \mu(\mathbf{H} + \mathbf{M}), \tag{1.4} $$

where $\mu$ is a physical constant known as the permeability. In a vacuum, this is the permeability of free space, $\mu_o$. In the SI system, $\mu$ has dimensions of henries per meter and $\mu_o$ is $4\pi \times 10^{-7}$ H$\cdot$m$^{-1}$. In most cases of paleomagnetic interest, we are outside the magnetized body so $\mathbf{M} = 0$ and $\mathbf{B} = \mu_o\mathbf{H}$.
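The relations among $\mathbf{H}$, $\mathbf{M}$ and $\mathbf{B}$ can be made concrete with a short calculation. The sketch assumes the scalar forms $M_I = \chi_b H$ and $B = \mu_o(H + M)$. The field strength and susceptibility are illustrative values, and the function names are hypothetical.

```python
import math

MU_0 = 4.0 * math.pi * 1e-7  # permeability of free space, H/m


def induced_magnetization(chi_b, field_h):
    """Induced magnetization (A/m) for a scalar bulk susceptibility."""
    return chi_b * field_h


def induction(field_h, magnetization_m=0.0, mu=MU_0):
    """Magnetic induction B (T) from H and M (both in A/m)."""
    return mu * (field_h + magnetization_m)


h = 40.0      # A/m, illustrative field strength
chi = 0.01    # dimensionless, illustrative susceptibility

b_outside = induction(h)  # M = 0 outside the magnetized body
b_inside = induction(h, induced_magnetization(chi, h))
print(f"outside: {b_outside * 1e6:.2f} microtesla")
print(f"inside:  {b_inside * 1e6:.2f} microtesla")
```

With a susceptibility of 0.01, the induction inside the material exceeds the outside value by one percent, which shows why $\mathbf{B} = \mu_o\mathbf{H}$ is an adequate description away from magnetized bodies.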

So far, we have derived magnetic units in terms of the Système International (SI). In practice, you will notice that people frequently use what are known as cgs units, based on centimeters, grams and seconds. You may wonder why any fuss would be made over using meters as opposed to centimeters because the conversion is trivial. With magnetic units, however, the conversion is far from trivial and has been the source of confusion and many errors. So, in the interest of clearing things up, we will briefly outline the cgs approach to magnetic units.

The derivation of magnetic units in cgs is entirely different from SI. The approach we will take here follows that of Cullity (1972). We start with the concept of a magnetic pole with strength $p$ instead of with current loops as we did for SI units. We will consider the force between two poles $p_1, p_2$ (see Figure 1.5) by analogy with Coulomb’s law. This states that the force between two charges ($q_1, q_2$) is:

$$ F_{12} = k\frac{q_1 q_2}{r^2}, \tag{1.5} $$

where $r$ is the distance between the two charges. In cgs units, the proportionality constant $k$ is simply unity, whereas in SI units it is $\frac{1}{4\pi\epsilon_0}$, where $\epsilon_0 = \frac{10^7}{4\pi c^2}$ and $c$ is the speed of light in a vacuum (hence $\epsilon_0 = 8.854 \cdot 10^{-12}$ AsV$^{-1}$m$^{-1}$). [You can see why many people really prefer cgs, but we are not allowed to publish in cgs in most geophysical journals, so we must just grin and bear it!]
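The SI proportionality constant can be evaluated directly from the relation $\epsilon_0 = 10^7/(4\pi c^2)$. The sketch uses the defined value of the speed of light, 299 792 458 m/s; the variable names are illustrative.

```python
import math

C = 299_792_458.0  # speed of light in a vacuum, m/s (defined value)

epsilon_0 = 1e7 / (4.0 * math.pi * C ** 2)       # A s V^-1 m^-1
coulomb_k_si = 1.0 / (4.0 * math.pi * epsilon_0)  # SI proportionality constant k

print(f"epsilon_0 = {epsilon_0:.4e}")
print(f"k (SI)    = {coulomb_k_si:.4e}")
```

The result is about $8.854 \times 10^{-12}$ for $\epsilon_0$ and about $8.99 \times 10^{9}$ for $k$, compared with $k = 1$ in cgs.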

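For magnetic units, we use pole strength $p_1, p_2$ in units of electrostatic units or esu, so Equation 1.5 becomes

$$ F_{12} = \frac{p_1 p_2}{r^2}. $$

Force in cgs is in units of dynes (dyn), so

$$ 1\ \text{dyn} = 1\ \frac{\text{g cm}}{\text{s}^2} = 1\ \frac{\text{esu}^2}{\text{cm}^2}, $$

so one unit of pole strength is, rather awkwardly, 1 g$^{1/2}$ cm$^{3/2}$ s$^{-1}$.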

A magnetic pole, like an isolated electric charge, would create a magnetic induction $\mu_o\mathbf{H}$ in the space around it. One unit of field strength (defined as one oersted or Oe) is the unit of field strength that exerts a force of one dyne on a unit of pole strength. The related induction ($\mu_o\mathbf{H}$) has units of gauss or G.

The relationship between force, pole and magnetic field is written as:

$$ F = p\mu_o H. $$

Returning to the lines of force idea developed for magnetic fields earlier, let us define the oersted to be the magnetic field which would produce an induction with one unit of induction per square centimeter. Imagine a sphere with a radius $r$ surrounding the magnetic monopole. The surface area of such a sphere is $4\pi r^2$. When the sphere is a unit sphere ($r = 1$) and the field strength at the surface is 1 Oe, then there must be a magnetic flux of $4\pi$ units of induction passing through it.

You will have noticed the use of the permeability of free space $\mu_o$ in the above treatment, a parameter missing in many books and articles using the cgs units. The reason for this is that $\mu_o$ is unity in cgs units and simply converts oersteds ($H$) to gauss ($B = \mu_o H$). Therefore in cgs units, $B$ and $H$ are used interchangeably. We inserted it in this derivation to remind us that there IS a difference and that the difference becomes very important when we convert to SI because $\mu_o$ is not unity, but $4\pi \times 10^{-7}$! For conversion between commonly used cgs and SI parameters, please refer to Table 1.1.

Proceeding to the notion of magnetic moment, from a cgs point of view, we start with a magnet of length $l$ with a pole of strength $p$ at each end. Placing the magnet in a field $\mu_o\mathbf{H}$, we find that it experiences a torque $\boldsymbol{\Gamma}$ proportional to $p$, $l$ and $H$ such that

$$ \boldsymbol{\Gamma} = p\mathbf{l} \times \mu_o\mathbf{H}. \tag{1.6} $$

Recalling our earlier discussion of magnetic moment, you will realize that $pl$ is simply the magnetic moment $m$. This line of reasoning also makes clear why it is called a “moment”. The units of torque are energy, which are ergs in cgs, so the units of magnetic moment are technically erg per gauss. But because of the “silent” $\mu_o$ in cgs, magnetic moment is most often defined as erg per oersted. We therefore follow convention and define the “electromagnetic unit” (emu) as being one erg$\cdot$oe$^{-1}$. [Some use emu to refer to the magnetization (volume normalized moment, see above), but this is incorrect and a source of a lot of confusion.]

Table 1.1: Conversion between SI and cgs units.

| Parameter | SI unit | cgs unit | Conversion |
| --- | --- | --- | --- |
| Magnetic moment ($m$) | Am$^2$ | emu | 1 Am$^2$ = 10$^3$ emu |
| Magnetization | | | |
| by volume ($M$) | Am$^{-1}$ | emu cm$^{-3}$ | 1 Am$^{-1}$ = 10$^{-3}$ emu cm$^{-3}$ |
| by mass ($\Omega$) | Am$^2$kg$^{-1}$ | emu g$^{-1}$ | 1 Am$^2$kg$^{-1}$ = 1 emu g$^{-1}$ |
| Magnetic field ($H$) | Am$^{-1}$ | Oersted (oe) | 1 Am$^{-1}$ = $4\pi \times 10^{-3}$ oe |
| Magnetic induction ($B$) | T | Gauss (G) | 1 T = 10$^4$ G |
| Permeability | | | |
| of free space ($\mu_o$) | Hm$^{-1}$ | 1 | $4\pi \times 10^{-7}$ Hm$^{-1}$ = 1 |
| Susceptibility | | | |
| total ($K$: $m/H$) | m$^3$ | emu oe$^{-1}$ | 1 m$^3$ = $\frac{10^6}{4\pi}$ emu oe$^{-1}$ |
| by volume ($\chi$: $M/H$) | - | emu cm$^{-3}$ oe$^{-1}$ | 1 S.I. = $\frac{1}{4\pi}$ emu cm$^{-3}$ oe$^{-1}$ |
| by mass ($\kappa$: $\frac{m}{\text{mass}} \cdot \frac{1}{H}$) | m$^3$kg$^{-1}$ | emu g$^{-1}$ oe$^{-1}$ | 1 m$^3$kg$^{-1}$ = $\frac{10^3}{4\pi}$ emu g$^{-1}$ oe$^{-1}$ |

1 H = kg m$^2$A$^{-2}$s$^{-2}$, 1 emu = 1 G cm$^3$, $B = \mu_o H$ (in vacuum), 1 T = kg A$^{-1}$ s$^{-2}$
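Because cgs-to-SI conversion is a frequent source of mistakes, it can help to wrap the conversion factors in small functions. The sketch below encodes only factors listed in the table. The function names are hypothetical helpers, not part of PmagPy.

```python
import math

MU_0 = 4.0 * math.pi * 1e-7  # permeability of free space, H/m


def oersted_to_amp_per_m(h_oe):
    """Magnetic field H: 1 A/m = 4*pi*1e-3 Oe."""
    return h_oe / (4.0 * math.pi * 1e-3)


def gauss_to_tesla(b_gauss):
    """Magnetic induction B: 1 T = 1e4 G."""
    return b_gauss * 1e-4


def emu_to_am2(moment_emu):
    """Magnetic moment: 1 A m^2 = 1e3 emu."""
    return moment_emu * 1e-3


def emu_per_cm3_to_amp_per_m(magnetization_cgs):
    """Volume magnetization: 1 A/m = 1e-3 emu/cm^3."""
    return magnetization_cgs * 1e3


def volume_susceptibility_cgs_to_si(chi_cgs):
    """Volume susceptibility: 1 SI = 1/(4*pi) emu cm^-3 Oe^-1."""
    return chi_cgs * 4.0 * math.pi


# In cgs, a 0.5 Oe field and a 0.5 G induction are numerically equal in vacuum.
h_si = oersted_to_amp_per_m(0.5)
b_si = gauss_to_tesla(0.5)
print(f"H = {h_si:.2f} A/m, B = {b_si:.1e} T, mu_0 * H = {MU_0 * h_si:.1e} T")
```

The final line confirms that the two SI values remain consistent through $B = \mu_o H$, even though they are no longer numerically equal as they were in cgs.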

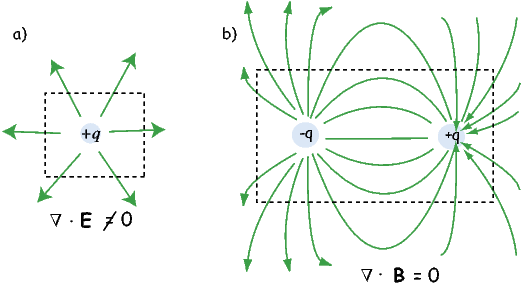

An isolated electrical charge produces an electrical field that begins at the source (the charge) and spreads (diverges) outward (see Figure 1.6a). Because there is no return flux to an oppositely charged “sink”, there is a net flux out of the dashed box shown in the figure. The divergence of the electrical field is defined as $\nabla \cdot \mathbf{E}$, which quantifies the net flux (see Appendix A.3.6 for more). In the case of the field around an electric charge, the divergence is non-zero.

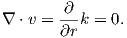

Magnetic fields are different from electrical fields in that there is no equivalent to an isolated electrical charge; there are only pairs of “opposite charges”, that is, magnetic dipoles. Therefore, any line of flux starting at one magnetic pole returns to its sister pole and there is no net flux out of the box shown in Figure 1.6b; the magnetic field has no divergence. This property of magnetic fields is another of Maxwell’s equations: $\nabla \cdot \mathbf{B} = 0$.

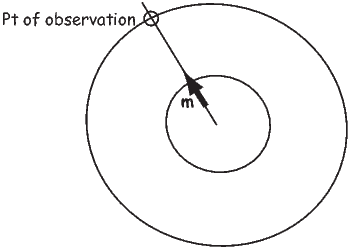

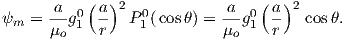

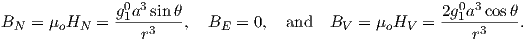

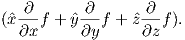

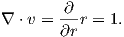

In the special case away from electric currents and magnetic sources (so $\mathbf{B} = \mu_o\mathbf{H}$), the magnetic field can be written as the gradient of a scalar field that is known as the magnetic potential, $\psi_m$, i.e.,

$$ \mathbf{H} = -\nabla\psi_m. $$

The presence of a magnetic moment $\mathbf{m}$ creates a magnetic field which is the gradient of some scalar field. To gain a better intuitive feel about the relationship between scalar fields and their gradient vector fields, see Appendix A.3.6. Because the divergence of the magnetic field is zero, by definition, the divergence of the gradient of the scalar field is also zero, or $\nabla^2\psi_m = 0$. The operator $\nabla^2$ is called the Laplacian and $\nabla^2\psi_m = 0$ is Laplace’s equation. This will be the starting point for spherical harmonic analysis of the geomagnetic field discussed briefly in Chapter 2.

The curl of the magnetic field ($\nabla \times \mathbf{H}$) depends on the current density and is not always zero, so magnetic fields cannot generally be represented as the gradient of a scalar field. Laplace’s equation is only valid outside the magnetic sources and away from currents.

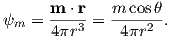

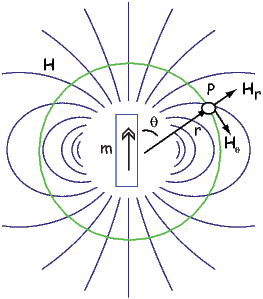

So what is this magnetic potential and how does it relate to the magnetic moments that give rise to the magnetic field? Whatever it is, it has to satisfy Laplace’s equation, so we turn to solutions of Laplace’s equation for help. One solution is to define the magnetic potential $\psi_m$ as a function of the vector $\mathbf{r}$ with radial distance $r$ and the angle $\theta$ from the moment. Given a dipole moment $\mathbf{m}$, a solution to Laplace’s equation is:

$$ \psi_m = \frac{\mathbf{m} \cdot \mathbf{r}}{4\pi r^3} = \frac{m\cos\theta}{4\pi r^2}. \tag{1.7} $$

You can verify this by making sure that $\nabla^2\psi_m = 0$.
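One way to carry out this verification without any algebra is a numerical check. The sketch below assumes the dipole potential $\psi_m = m\cos\theta/(4\pi r^2)$, written in Cartesian coordinates with the moment along $z$ (so $\cos\theta = z/r$). It estimates the Laplacian with central finite differences at an arbitrary test point away from the origin. The step size and test point are illustrative choices.

```python
import math


def dipole_potential(x, y, z, moment=1.0):
    """Dipole potential with the moment along z: m * z / (4 * pi * r^3)."""
    r = math.sqrt(x * x + y * y + z * z)
    return moment * z / (4.0 * math.pi * r ** 3)


def laplacian(f, x, y, z, h=1e-3):
    """Second-order central-difference estimate of the Laplacian of f at (x, y, z)."""
    total = (
        f(x + h, y, z) + f(x - h, y, z)
        + f(x, y + h, z) + f(x, y - h, z)
        + f(x, y, z + h) + f(x, y, z - h)
        - 6.0 * f(x, y, z)
    )
    return total / h ** 2


point = (0.7, -0.4, 1.1)  # arbitrary location away from the dipole
print("dipole potential:", laplacian(dipole_potential, *point))

# For contrast, x^2 + y^2 + z^2 is not harmonic: its Laplacian is 6 everywhere.
print("r squared:       ", laplacian(lambda x, y, z: x * x + y * y + z * z, *point))
```

The first value is zero to within the discretization error, while the second is close to 6. The check fails at the origin itself, where the source sits and Laplace’s equation does not apply.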

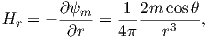

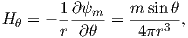

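The radial ($H_r$) and tangential ($H_\theta$) components of $\mathbf{H}$ at P (Figure 1.7) then would be:

$$ H_r = -\frac{\partial\psi_m}{\partial r} = \frac{1}{4\pi}\frac{2m\cos\theta}{r^3} \quad\text{and}\quad H_\theta = -\frac{1}{r}\frac{\partial\psi_m}{\partial\theta} = \frac{m\sin\theta}{4\pi r^3}, \tag{1.8} $$

respectively.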
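The dipole field components are easy to evaluate numerically. The sketch assumes the standard dipole expressions $H_r = 2m\cos\theta/(4\pi r^3)$ and $H_\theta = m\sin\theta/(4\pi r^3)$, with $\theta$ measured from the moment. The Earth-like moment ($8 \times 10^{22}$ Am$^2$) and radius (6371 km) are round illustrative numbers, not values taken from this chapter.

```python
import math

MU_0 = 4.0 * math.pi * 1e-7  # permeability of free space, H/m


def dipole_field(moment_am2, r_m, theta_deg):
    """Return (H_r, H_theta) in A/m at distance r and angle theta from the moment."""
    theta = math.radians(theta_deg)
    common = moment_am2 / (4.0 * math.pi * r_m ** 3)
    return 2.0 * common * math.cos(theta), common * math.sin(theta)


# Illustrative Earth-like values (assumptions for this sketch).
moment, radius = 8e22, 6.371e6

for theta in (0, 45, 90):
    h_r, h_theta = dipole_field(moment, radius, theta)
    b_total = MU_0 * math.hypot(h_r, h_theta)
    print(f"theta = {theta:2d} deg: H_r = {h_r:6.2f}, H_theta = {h_theta:6.2f} A/m, "
          f"B = {b_total * 1e6:5.1f} microtesla")
```

Along the axis of the moment the field is purely radial and twice as strong as the purely tangential field at 90°, a factor that follows directly from the 2 in the expression for $H_r$.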

Measurement and description of the geomagnetic field and its spatial and temporal variations constitute one of the oldest geophysical disciplines. However, our ability to describe the field far exceeds our understanding of its origin. All plausible theories involve generation of the geomagnetic field within the fluid outer core of the Earth by some form of magnetohydrodynamic dynamo. Attempts to solve the full mathematical complexities of magnetohydrodynamics succeeded only in 1995 (Glatzmaier and Roberts, 1995).

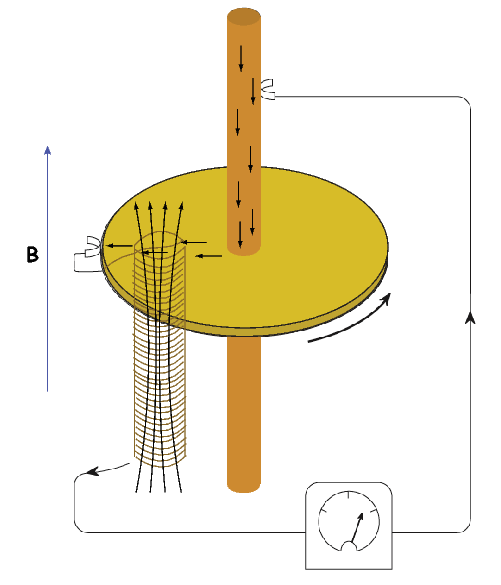

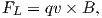

Quantitative treatment of magnetohydrodynamics is (mercifully) beyond the scope of this book, but we can provide a qualitative explanation. The first step is to gain some appreciation for what is meant by a self-exciting dynamo. Maxwell’s equations tell us that electric and changing magnetic fields are closely linked and can affect each other. Moving an electrical conductor through a magnetic field will cause electrons to flow, generating an electrical current. This is the principle of electric generators. A simple electromechanical disk-dynamo model such as that shown in Figure 1.8 contains the essential elements of a self-exciting dynamo. The model is constructed with a rotating copper disk attached to an electrically conducting (e.g., brass) axle. An initial magnetic induction field, $\mathbf{B}$, is perpendicular to the copper disk in an upward direction. Electrons in the copper disk experience a push from the magnetic field known as the Lorentz force, $\mathbf{F}_L$, when they pass through the field.

The Lorentz force is given by:

$$ \mathbf{F}_L = q\mathbf{v} \times \mathbf{B}, \tag{1.9} $$

where q is the electrical charge of the electrons, and v is their velocity. The Lorentz force on the electrons is directed toward the axle of the disk and the resulting electrical current flow is toward the outside of the disk (Figure 1.8).
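The direction of the Lorentz force follows from a cross product, which can be sketched in plain Python. The example assumes the form $\mathbf{F}_L = q\mathbf{v} \times \mathbf{B}$. The coordinate axes, electron velocity and induction are illustrative, and no attempt is made to reproduce the exact geometry of Figure 1.8.

```python
ELECTRON_CHARGE = -1.602176634e-19  # coulombs (negative for an electron)


def cross(a, b):
    """Cross product of two 3-vectors given as tuples."""
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


def dot(a, b):
    """Dot product of two 3-vectors."""
    return sum(ai * bi for ai, bi in zip(a, b))


def lorentz_force(charge_c, velocity, induction):
    """Magnetic part of the Lorentz force: F = q v x B (newtons)."""
    return tuple(charge_c * component for component in cross(velocity, induction))


# Illustrative setup: B points up (+z); the electron moves along +x.
velocity = (1.0, 0.0, 0.0)     # m/s
induction = (0.0, 0.0, 50e-6)  # T
force = lorentz_force(ELECTRON_CHARGE, velocity, induction)
print("force on electron (N):", force)
print("F . v =", dot(force, velocity), " F . B =", dot(force, induction))
```

The force is perpendicular to both the velocity and the induction. Because the electron’s charge is negative, it points along $+y$ here, opposite to $\mathbf{v} \times \mathbf{B}$.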

Brush connectors are used to tap the electrical current from the disk, and the current passes through a coil under the disk. This coil is cleverly wound so that the electrical current produces a magnetic induction field in the same direction as the original field. The electrical circuit is a positive feedback system that reinforces the original magnetic induction field. The entire disk-dynamo model is a self-exciting dynamo. As long as the disk keeps rotating, the electrical current will flow, and the magnetic field will be sustained even if the original field disappears.

With this simple model we encounter the essential elements of any self-exciting dynamo:

More complicated setups using two disks whose fields interact with one another generate chaotic magnetic behavior that can switch polarities even if the mechanical motion remains steady. Certainly no one proposes that systems of disks and feedback coils exist in the Earth’s core. But interaction between the magnetic field and the electrically conducting iron-nickel alloy in the outer core can produce a positive feedback and allow the Earth’s core to operate as a self-exciting magnetohydrodynamic dynamo. For reasonable electrical conductivities, fluid viscosity, and plausible convective fluid motions in the Earth’s outer core, the fluid motions can regenerate the magnetic field that is lost through electrical resistivity. There is a balance between fluid motions regenerating the magnetic field and loss of magnetic field because of electrical resistivity. The dominant portion of the geomagnetic field detectable at the surface is essentially dipolar with the axis of the dipole nearly parallel to the rotational axis of the Earth. Rotation of the Earth must therefore be a controlling factor on the time-averaged fluid motions in the outer core. It should also be pointed out that the magnetohydrodynamic dynamo can operate in either polarity of the dipole. Thus, there is no contradiction between the observation of reversals of the geomagnetic dipole and magnetohydrodynamic generation of the geomagnetic field. However, understanding the special interactions of fluid motions and magnetic field that produce geomagnetic reversals is a major challenge.

As wise economists have long observed, there is no free lunch. The geomagnetic field is no exception. Because of ohmic dissipation of energy, there is a requirement for energy input to drive the magnetohydrodynamic fluid motions and thereby sustain the geomagnetic field. Estimates of the power (energy per unit time) required to generate the geomagnetic field are about $10^{13}$ W (roughly the output of $10^4$ nuclear power plants). This is about one fourth of the total geothermal flux, so the energy involved in generation of the geomagnetic field is a substantial part of the Earth’s heat budget.

Many sources of this energy have been proposed, and ideas on this topic have changed over the years. The energy sources currently thought to be most reasonable are a combination of cooling of the Earth’s core with attendant freezing of the outer core and growth of the solid inner core. The inner core is pure iron, while the liquid outer core is some 15% nickel (and probably has trace amounts of other elements as well). The freezing of the inner core therefore generates a buoyancy force as the remaining liquid becomes more enriched in the lighter elements. These energy sources are sufficient to power the fluid motions of the outer core required to generate the geomagnetic field.

SUPPLEMENTAL READINGS: Jiles (1991), Chapter 1; Cullity (1972), Chapter 1.

Problem 1

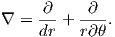

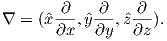

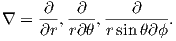

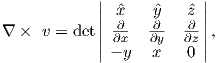

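In axisymmetric spherical coordinates, ∇ (the gradient operator) is given by

$$\nabla = \hat{\mathbf{r}}\,\frac{\partial}{\partial r} + \hat{\boldsymbol{\theta}}\,\frac{1}{r}\frac{\partial}{\partial \theta}.$$

We also know that

$$\mathbf{H} = -\nabla \psi_m$$

and that ψm is a scalar function of position:

$$\psi_m = \frac{m \cos\theta}{4 \pi r^2}.$$

Find the radial and tangential components of H if m is 80 ZAm$^2$ [remember that “Z” stands for zetta, which stands for $10^{21}$], r is $6 \times 10^6$ m and θ is 45∘. What are these field values in terms of B (teslas)?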

Write your answers in a markdown cell in a jupyter notebook using latex syntax.

Problem 2

a) In your Jupyter notebook, write Python functions to convert induction, moment and magnetic field quantities in cgs units to SI units. Use the conversion factors in Table 1.1. Use your function to convert the following from cgs to SI:

i) B = $3.5 \times 10^5$ G

ii) m = $2.78 \times 10^{-20}$ G cm$^3$

iii) H = 128 Oe

b) In a new code block, modify your function to allow conversion from cgs => SI or SI => cgs. Rerun it to convert your answers from a) back to cgs.

HINTS: Call the functions with the values of B, m and H and have the function return the converted values. In the modified functions, you can specify whether the conversion is from cgs or SI.

Problem 3

Figure 1.9 shows a meridional cross section through the Earth in the plane of a magnetic dipole source m. At the location directly above the dipole, the field from the dipole is directed vertically downward and has intensity 10 μT. The dipole source is placed at 3480 km from the center of the Earth. Assume a mean Earth radius of 6370 km. Adapt the geometry of Figure 1.7 and the equations describing the magnetic field of a dipole to the model dipole in Figure 1.9.

a) Calculate the magnetic dipole moment of the model dipole. Remember to keep track of your units!

b) Compare this field to the total field produced by a centered axial magnetic dipole moment (i.e., one that is pointing straight up and is in the center of the circles) equivalent to that of the present geomagnetic field (m ∼ 80 ZAm$^2$; Z = $10^{21}$). Assume a latitude for the point of observation of 60∘. [HINT: the angle θ in Equation 1.10 is the co-latitude, not the latitude.]

| (1.10) |

Problem 4

Knowing that $\mathbf{B} = \mu_0 \mathbf{H}$, work out the fundamental units of $\mu_0$ in SI units. Prepare your answer in a markdown cell in your Jupyter notebook.

The part of the geomagnetic field of interest to paleomagnetists is generated by convection currents in the liquid outer core of the Earth, which is composed of iron, nickel and some unknown lighter component(s). The source of energy for this convection is not known for certain, but is thought to be partly from cooling of the core and partly from the buoyancy of the iron/nickel liquid outer core caused by freezing out of the pure iron inner core. Motions of this conducting fluid are controlled by the buoyancy of the liquid, the spin of the Earth about its axis and by the interaction of the conducting fluid with the magnetic field (in a horribly non-linear fashion). Solving the equations for the fluid motions and resulting magnetic fields is a challenging computational task. Recent numerical models, however, show that such magnetohydrodynamical systems can produce self-sustaining dynamos which create enormous external magnetic fields.

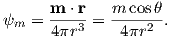

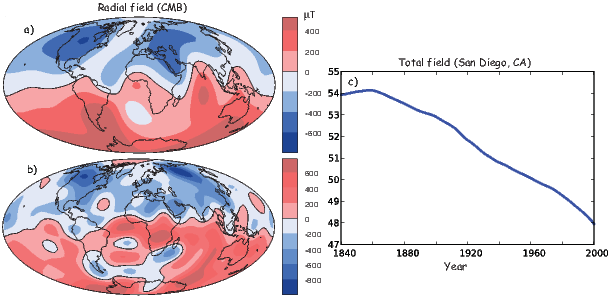

The magnetic field of a dipole aligned along the spin axis and centered in the Earth (a so-called geocentric axial dipole, or GAD) is shown in Figure 2.1a. [See Chapter 1 for a derivation of how to find the radial and tangential components of such a field.] By convention, the sign of the Earth’s dipole is negative, pointing toward the south pole as shown in Figure 2.1a and magnetic field lines point toward the north pole. They point downward in the northern hemisphere and upward in the southern hemisphere.

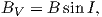

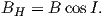

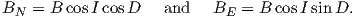

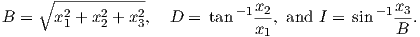

Although dominantly dipolar, the geomagnetic field is not perfectly modeled by a geocentric axial dipole, but is somewhat more complicated (see Figure 2.1b). At the point on the surface labeled ‘P’, the geomagnetic field points nearly north and down at an angle of approximately 60∘. Vectors in three dimensions are described by three numbers and in many paleomagnetic applications, these are two angles (D and I) and the strength (B) as shown in Figure 2.1b and c. The angle from the horizontal plane is the inclination I; it is positive downward and ranges from +90∘ for straight down to -90∘ for straight up. If the geomagnetic field were that of a perfect GAD field, the horizontal component of the magnetic field (BH in Figure 2.1b) would point directly toward geographic north. In most places on the Earth there is a deflection away from geographic north and the angle between geographic and magnetic north is the declination, D (see Figure 2.1c). D is measured positive clockwise from North and ranges from 0 → 360∘. [Westward declinations can also be expressed as negative numbers, i.e., 350∘ = -10∘.] The vertical component (BV in Figure 2.1b,c) of the geomagnetic field at P, is given by

$$B_V = B \sin I \tag{2.1}$$

and the horizontal component BH (Figure 2.1c) by

$$B_H = B \cos I. \tag{2.2}$$

BH can be further resolved into north and east components (BN and BE in Figure 2.1c) by

$$B_N = B \cos I \cos D, \qquad B_E = B \cos I \sin D. \tag{2.3}$$
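The decomposition of a field vector into vertical, north and east components can be sketched in a few lines of Python. This is a minimal illustration using only the standard `math` module; the function name `dir_to_cart` and the example values are assumptions made for this sketch and are not part of PmagPy.

```python
import math

def dir_to_cart(dec, inc, intensity=1.0):
    """Convert declination and inclination (degrees) plus intensity
    into north, east and down (vertical) components."""
    d = math.radians(dec)             # trig functions expect radians
    i = math.radians(inc)
    b_h = intensity * math.cos(i)     # horizontal component
    b_n = b_h * math.cos(d)           # north component
    b_e = b_h * math.sin(d)           # east component
    b_v = intensity * math.sin(i)     # vertical component, positive down
    return b_n, b_e, b_v

# Hypothetical field: D = 10 degrees, I = 60 degrees, B = 50 (e.g., microtesla)
b_n, b_e, b_v = dir_to_cart(10.0, 60.0, 50.0)
print(b_n, b_e, b_v)
```

The three components carry the same units as the intensity passed in, and their root sum of squares recovers B.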

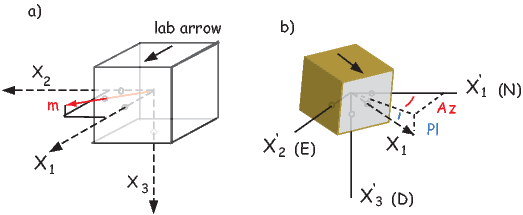

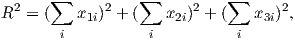

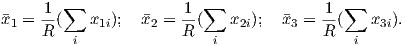

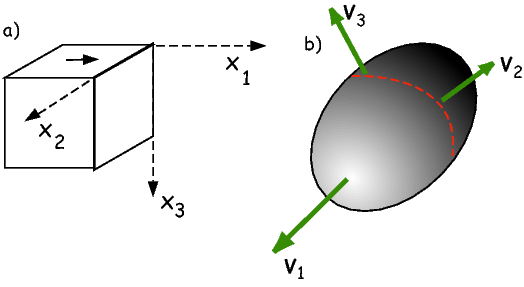

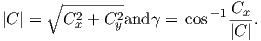

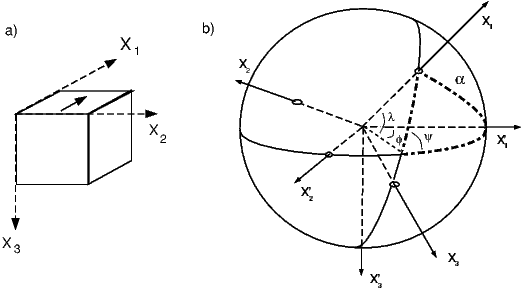

Depending on the particular problem, some coordinate systems are more suitable to use because they have the symmetry of the problem built into them. We have just defined a coordinate system using two angles and a length (B,D,I) and the equivalent Cartesian coordinates of (BN,BE,BV ). We will need to convert among them at will. There are many names for the Cartesian coordinates. In addition to north, east and down, they could also be x,y,z or even x1,x2 and x3. The convention used in this book is that axes are denoted X1,X2,X3, while the components along the axes are frequently designated x1,x2,x3. In the geographic frame of reference, positive X1 is to the north, X2 is east and X3 is vertically down in keeping with the right-hand rule. To convert from Cartesian coordinates to angular coordinates (B,D,I):

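$$B = \sqrt{x_1^2 + x_2^2 + x_3^2}, \qquad D = \tan^{-1}\frac{x_2}{x_1}, \qquad I = \sin^{-1}\frac{x_3}{B}. \tag{2.4}$$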

Be careful of the sign ambiguity of the tangent function. You may well end up in the wrong quadrant and have to add 180∘; this will happen whenever x1 is negative. In most computer languages, there is a function atan2 which takes care of this, but most hand calculators will not. Remember that most computer languages expect angles to be given in radians, not degrees, so multiply degrees by π∕180 to convert to radians. Note also that in place of B for magnetic induction with units of tesla as a measure of vector length (see Chapter 1), we could also use H, M (both Am$^{-1}$) or m (Am$^2$) for magnetic field, magnetization or magnetic moment respectively.
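As a hedged illustration of the quadrant issue, the sketch below converts Cartesian components back to declination, inclination and length using `math.atan2`, which takes the signs of both arguments into account. The function name `cart_to_dir` is an assumption of this example, and a zero-length vector is not handled.

```python
import math

def cart_to_dir(x1, x2, x3):
    """Convert north (x1), east (x2) and down (x3) components to
    declination and inclination (degrees) and vector length."""
    b = math.sqrt(x1**2 + x2**2 + x3**2)             # vector length
    dec = math.degrees(math.atan2(x2, x1)) % 360.0   # atan2 picks the right quadrant
    inc = math.degrees(math.asin(x3 / b))            # positive down
    return dec, inc, b

# A horizontal vector pointing southwest: both x1 and x2 are negative.
dec, inc, b = cart_to_dir(-1.0, -1.0, 0.0)
print(dec, inc, b)   # declination comes out at 225 degrees, not 45
```

A plain arctangent of x2/x1 would return 45∘ for this vector, because the two negative signs cancel in the ratio.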

We can measure declination, inclination and intensity at different places around the globe, but not everywhere all the time. Yet it is often handy to be able to predict what these components are. For example, it is extremely useful to know the deviation between true North and magnetic North (the declination) in order to find our way with maps and compasses. In principle, magnetic field vectors can be derived from the magnetic potential ψm as we showed in Chapter 1. For an axial dipolar field, there is but one scalar coefficient (the magnetic moment m of a dipole source). For the geomagnetic field, there are many more coefficients, including not just an axial dipole aligned with the spin axis, but two orthogonal equatorial dipoles and a whole host of more complicated sources such as quadrupoles, octupoles and so on. A list of coefficients associated with these sources allows us to calculate the magnetic field vector anywhere outside of the source region. In this section, we outline how this might be done.

As we learned in Chapter 1, the magnetic field at the Earth’s surface can be calculated from the gradient of a scalar potential field (H = −∇ψm), and this scalar potential field satisfies Laplace’s Equation:

$$\nabla^2 \psi_m = 0. \tag{2.5}$$

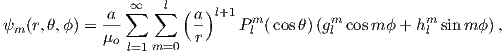

For the geomagnetic field (ignoring external sources of the magnetic field which are in any case small and transient), the potential equation can be written as:

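$$\psi_m(r, \theta, \phi) = \frac{a}{\mu_0} \sum_{l=1}^{\infty} \sum_{m=0}^{l} \left(\frac{a}{r}\right)^{l+1} P_l^m(\cos\theta) \left(g_l^m \cos m\phi + h_l^m \sin m\phi\right), \tag{2.6}$$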

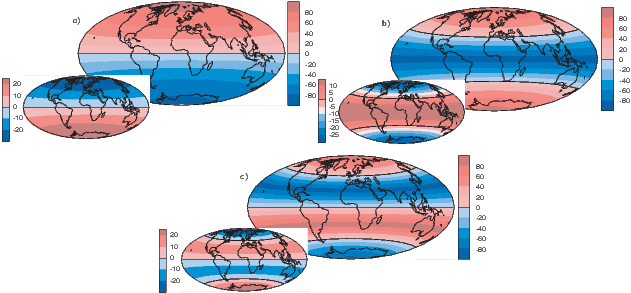

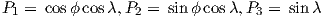

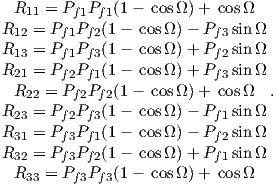

where a is the radius of the Earth ($6.371 \times 10^6$ m). In addition to the radial distance r and the angle away from the pole θ, there is ϕ, the angle around the equator from some reference, say, the Greenwich meridian. Here, θ is the co-latitude and ϕ is the longitude. The $g_l^m$s and $h_l^m$s are the gauss coefficients (degree l and order m) for hypothetical sources at radii less than a, calculated for a particular year. These are normally given in units of nT. The $P_l^m$s are wiggly functions called partially normalized Schmidt polynomials of the argument cosθ. These are closely related to the associated Legendre polynomials. [When m = 0 the Schmidt and Legendre polynomials are identical.] The first few of the $P_l^m$s, for m = 0, are:

$$P_1^0 = \cos\theta, \qquad P_2^0 = \tfrac{1}{2}\left(3\cos^2\theta - 1\right), \qquad P_3^0 = \tfrac{1}{2}\cos\theta\left(5\cos^2\theta - 3\right).$$

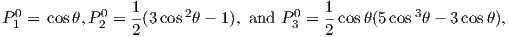

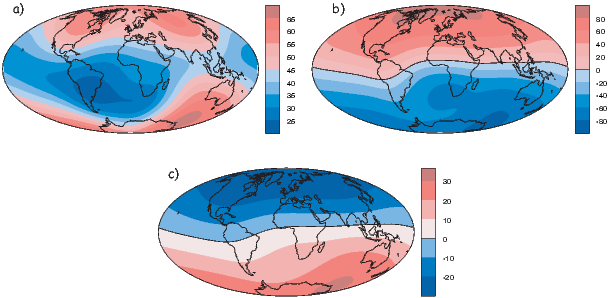

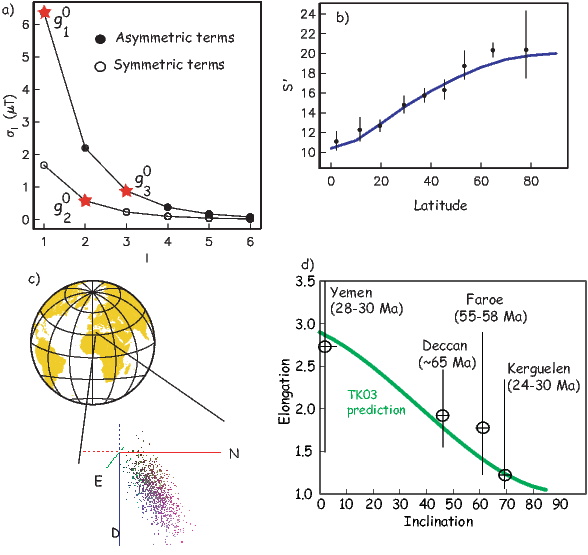

To get an idea of how the gauss coefficients in the potential relate to the associated magnetic fields, we show three examples in Figure 2.3. We plot the inclinations of the vector fields that would be produced by the terms with $g_1^0$, $g_2^0$ and $g_3^0$ respectively. These are the axial (m = 0) dipole (l = 1), quadrupole (l = 2) and octupole (l = 3) terms. The associated potentials for each harmonic are shown in the insets.

In general, terms for which the difference between the subscript (l) and the superscript (m) is odd (e.g., the axial dipole $g_1^0$ and octupole $g_3^0$) produce magnetic fields that are antisymmetric about the equator, while those for which the difference is even (e.g., the axial quadrupole $g_2^0$) have symmetric fields. In Figure 2.3a we show the inclinations produced by a purely dipolar field of the same sign as the present day field. The inclinations are all positive (down) in the northern hemisphere and negative (up) in the southern hemisphere. In contrast, inclinations produced by a purely quadrupolar field (Figure 2.3b) are down at the poles and up at the equator. The map of inclinations produced by a purely axial octupolar field (Figure 2.3c) is again antisymmetric about the equator, with vertical directions of opposite signs at the poles separated by bands with the opposite sign at mid-latitudes.

As noted before, there is not one, but three dipole terms in Equation 2.6, the axial term ($g_1^0$) and two equatorial terms ($g_1^1$ and $h_1^1$). Therefore, the total dipole contribution is the vector sum of these three or $\sqrt{(g_1^0)^2 + (g_1^1)^2 + (h_1^1)^2}$. The total quadrupole contribution (l = 2) combines five coefficients and the total octupole (l = 3) contribution combines seven coefficients.

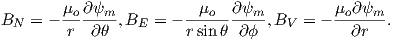

So how do we get this marvelous list of gauss coefficients? If you want to know the details, please refer to Langel (1987). We will just give a brief introduction here. Recalling Chapter 1, once the scalar potential ψm is known, the components of the magnetic field can be calculated from it. We solved this for the radial and tangential field components (Hr and Hθ) in Chapter 1. We will now change coordinate and unit systems and introduce a third dimension (because the field is not perfectly dipolar). The north, east, and vertically down components are related to the potential ψm by:

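$$B_N = \frac{\mu_0}{r} \frac{\partial \psi_m}{\partial \theta}, \qquad B_E = -\frac{\mu_0}{r \sin\theta} \frac{\partial \psi_m}{\partial \phi}, \qquad B_V = \mu_0 \frac{\partial \psi_m}{\partial r}, \tag{2.7}$$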

where r, θ, ϕ are radius, co-latitude (degrees away from the North pole) and longitude, respectively. Here, BV is positive down, BE is positive east, and BN is positive to the north, the opposite of Hr and Hθ as defined in Chapter 1. Note that Equation 2.7 is in units of induction, not Am−1 if the units for the gauss coefficients are in nT, as is the current practice.

Going backwards, the gauss coefficients are determined by fitting Equations 2.7 and 2.6 to observations of the magnetic field made by magnetic observatories or satellites for a particular time. The International (or Definitive) Geomagnetic Reference Field, or I(D)GRF, for a given time interval is an agreed upon set of values for a number of gauss coefficients and their time derivatives. IGRF (or DGRF) models and programs for calculating various components of the magnetic field are available on the internet from the National Geophysical Data Center; the address is http://www.ngdc.noaa.gov. There is also a program igrf.py included in the PmagPy package (see igrf.py documentation).

In practice, the gauss coefficients for a particular reference field are estimated by least-squares fitting of observations of the geomagnetic field. You need a minimum of 48 observations to estimate the coefficients to l = 6. Nowadays, we have satellites which give us thousands of measurements and the list of generation 10 of the IGRF for 2005 goes to l = 13.
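The count of 48 follows from the fact that each degree l contributes l + 1 values of g and l values of h (there is no h term for m = 0), or 2l + 1 coefficients in all. A short sketch of that bookkeeping, with a function name chosen only for this example:

```python
def n_gauss_coefficients(l_max):
    """Number of gauss coefficients needed through degree l_max:
    (l + 1) g terms plus l h terms at each degree l."""
    return sum(2 * l + 1 for l in range(1, l_max + 1))

print(n_gauss_coefficients(6))    # coefficients through degree 6
print(n_gauss_coefficients(13))   # coefficients through degree 13
```

The sum has the closed form l_max(l_max + 2), so a model to degree 13 already needs 195 coefficients.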

| l | m | g (nT) | h (nT) | l | m | g (nT) | h (nT) |
| --- | --- | --- | --- | --- | --- | --- | --- |
| 1 | 0 | -29442.0 | 0 | 5 | 0 | -232.6 | 0 |
| 1 | 1 | -1501.0 | 4797.1 | 5 | 1 | 360.1 | 47.3 |
| 2 | 0 | -2445.1 | 0 | 5 | 2 | 192.4 | 197.0 |
| 2 | 1 | 3012.9 | -2845.6 | 5 | 3 | -140.9 | -119.3 |
| 2 | 2 | 1676.7 | -641.9 | 5 | 4 | -157.5 | 16.0 |
| 3 | 0 | 1350.7 | 0 | 5 | 5 | 4.1 | 100.2 |
| 3 | 1 | -2352.3 | -115.3 | 6 | 0 | 70.0 | 0 |
| 3 | 2 | 1225.6 | 244.9 | 6 | 1 | 67.7 | -20.8 |
| 3 | 3 | 582.0 | -538.4 | 6 | 2 | 72.7 | 33.2 |
| 4 | 0 | 907.6 | 0 | 6 | 3 | -129.9 | 58.9 |
| 4 | 1 | 813.7 | 283.3 | 6 | 4 | -28.9 | -66.7 |
| 4 | 2 | 120.4 | -188.7 | 6 | 5 | 13.2 | 7.3 |
| 4 | 3 | -334.9 | 180.9 | 6 | 6 | -70.9 | 62.6 |
| 4 | 4 | 70.4 | -329.5 | | | | |

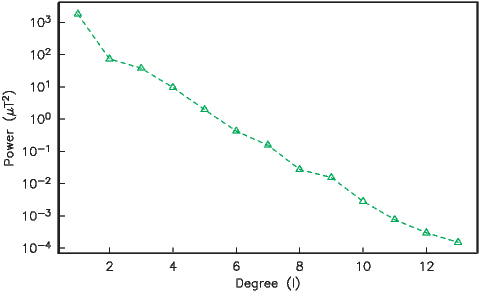

In order to get a feel for the importance of the various gauss coefficients, take a look at Table 2.1, which has the Schmidt quasi-normalized gauss coefficients for the first six degrees from the IGRF for 2015. The power at each degree is the average squared field per spherical harmonic degree over the Earth’s surface and is calculated by $R_l = (l + 1)\sum_m \left[(g_l^m)^2 + (h_l^m)^2\right]$ (Lowes, 1974). The so-called Lowes spectrum is shown in Figure 2.4. It is clear that the lowest degree terms (degree one) totally dominate, constituting some 90% of the field. This is why the geomagnetic field is often assumed to be equivalent to a magnetic field created by a simple dipole at the center of the Earth.
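The power per degree can be checked directly from Table 2.1. The sketch below is a minimal illustration, not a PmagPy routine: the dictionary `TABLE_2_1` is a hand transcription of the table, and because the table stops at degree six the dipole fraction it reports refers only to those six degrees.

```python
# Gauss coefficients (nT) transcribed from Table 2.1.
# Layout: {degree l: [(g, h) for order m = 0 .. l]}
TABLE_2_1 = {
    1: [(-29442.0, 0.0), (-1501.0, 4797.1)],
    2: [(-2445.1, 0.0), (3012.9, -2845.6), (1676.7, -641.9)],
    3: [(1350.7, 0.0), (-2352.3, -115.3), (1225.6, 244.9), (582.0, -538.4)],
    4: [(907.6, 0.0), (813.7, 283.3), (120.4, -188.7), (-334.9, 180.9),
        (70.4, -329.5)],
    5: [(-232.6, 0.0), (360.1, 47.3), (192.4, 197.0), (-140.9, -119.3),
        (-157.5, 16.0), (4.1, 100.2)],
    6: [(70.0, 0.0), (67.7, -20.8), (72.7, 33.2), (-129.9, 58.9),
        (-28.9, -66.7), (13.2, 7.3), (-70.9, 62.6)],
}

def lowes_power(coeffs):
    """Return {l: R_l} with R_l = (l + 1) * sum over m of (g^2 + h^2), in nT^2."""
    return {l: (l + 1) * sum(g**2 + h**2 for g, h in gh)
            for l, gh in coeffs.items()}

power = lowes_power(TABLE_2_1)
dipole_fraction = power[1] / sum(power.values())

for l in sorted(power):
    print(l, f"{power[l]:.3e}")
print("dipole share of power through degree 6:", round(dipole_fraction, 3))
```

The power drops steadily with increasing degree, and the degree one share comes out above 0.9 for these coefficients.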

The beauty of using the geomagnetic potential field is that the vector field can be evaluated anywhere outside the source region. Using the values for a given reference field in Equations 2.6 and 2.7, we can calculate values of B,D and I at any location on Earth. Figure 2.1b shows the lines of flux predicted from the 2005 IGRF from the core-mantle boundary up. We can see that the field becomes simpler and more dipolar as we move from the core mantle boundary to the surface. Yet, there is still significant non-dipolar structure in the geomagnetic field even at the Earth’s surface.

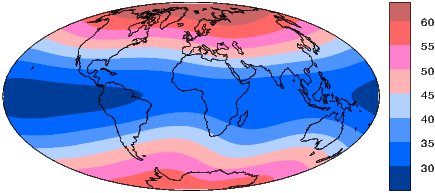

We can recast the vectors at the surface of the Earth into maps of components as shown in Figure 2.5a,b. We show the potential in Figure 2.5c for comparison with that of a pure dipole (inset to Figure 2.3a). These maps illustrate the fact that the field is a complicated function of position on the surface of the Earth. The intensity values in Figure 2.5a are, in general, highest near the poles (∼ 60 μT) and lowest near the equator (∼ 30 μT), but the contours are not straight lines parallel to latitude as they would be for a field generated strictly by a geocentric axial dipole (GAD) (e.g., Figure 2.1a). Similarly, a GAD would produce lines of inclination that vary in a regular way from -90∘ to +90∘ at the poles, with 0∘ at the equator; the contours would parallel the lines of latitude. Although the general trend in inclination shown in Figure 2.5b is similar to the GAD model, the contours are more complicated, which again suggests that the field is not perfectly described by a geocentric bar magnet.

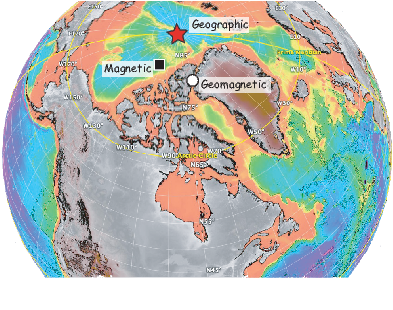

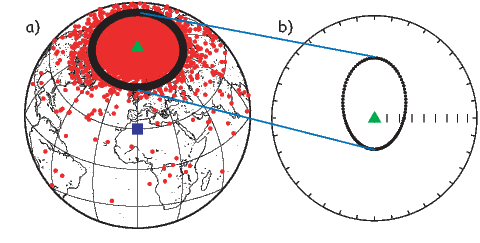

Perhaps the most important result of spherical harmonic analysis for our purposes is that the field at the Earth’s surface is dominated by the degree one terms (l = 1) and the external contributions are very small. The degree one terms can be thought of as geocentric dipoles that are aligned with three different axes: the spin axis ($g_1^0$) and two equatorial axes that intersect the equator at the Greenwich meridian ($g_1^1$) and at 90∘ East ($h_1^1$). The vector sum of these geocentric dipoles is a dipole that is currently inclined by about 10∘ to the spin axis. The axis of this best-fitting dipole pierces the surface of the Earth at the circle in Figure 2.6. This point and its antipode are called geomagnetic poles. Points at which the field is vertical (I = ±90∘ shown by a square in Figure 2.6) are called magnetic poles, or sometimes dip poles. These poles are distinguishable from the geographic poles, where the spin axis of the Earth intersects its surface. The northern geographic pole is shown by a star in Figure 2.6.
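The tilt of the best-fitting dipole can be estimated from the three degree one coefficients in Table 2.1. The following is a sketch under stated assumptions: the three coefficients are treated as the components of a vector along the spin axis, the Greenwich meridian and 90∘ East, and the northern geomagnetic pole is taken to lie opposite to that vector because the axial term is negative. The variable names are chosen for this example only.

```python
import math

# Degree one gauss coefficients from Table 2.1 (nT)
g10, g11, h11 = -29442.0, -1501.0, 4797.1

b0 = math.sqrt(g10**2 + g11**2 + h11**2)      # strength of the total dipole term
tilt = math.degrees(math.acos(-g10 / b0))     # angle between dipole axis and spin axis
pole_lat = 90.0 - tilt                        # latitude of the northern geomagnetic pole
pole_lon = math.degrees(math.atan2(-h11, -g11)) % 360.0   # its east longitude

print(round(tilt, 1), round(pole_lat, 1), round(pole_lon, 1))
```

For these coefficients the tilt comes out a little under 10∘, consistent with the statement above.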

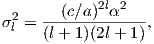

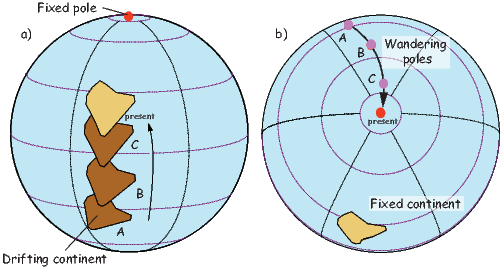

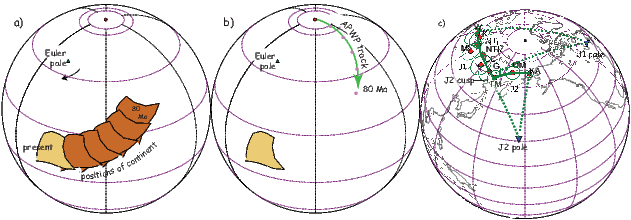

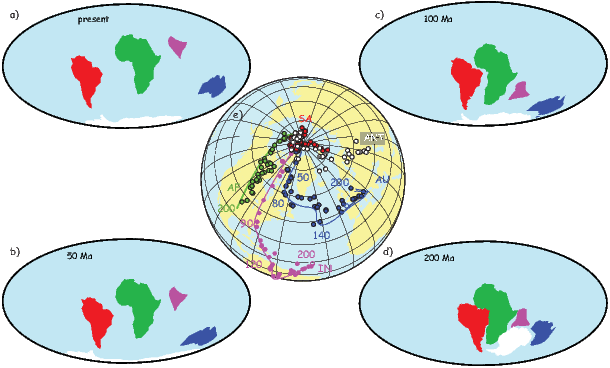

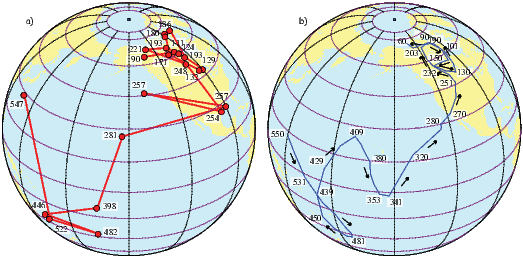

It turns out that when averaged over sufficient time, the geomagnetic field actually does seem to be approximately a GAD field, perhaps with a pinch of $g_2^0$ thrown in (see e.g., Merrill et al., 1996). The GAD model of the field will serve as a useful crutch throughout our discussions of paleomagnetic data and applications. Averaging ancient magnetic poles over enough time to average out secular variation (thought to be $10^4$ or $10^5$ years) gives what is known as a paleomagnetic pole; this is usually assumed to be co-axial with the Earth’s geographic pole (the spin axis).

Because the geomagnetic field is axially dipolar to a first approximation, we can write:

$$\psi_m = \frac{a}{\mu_0} \left(\frac{a}{r}\right)^2 g_1^0 \cos\theta. \tag{2.8}$$

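Note that $g_1^0$ is given in nT in Table 2.1. Thus, from Equation 2.8,

$$B_V = \mu_0 \frac{\partial \psi_m}{\partial r} = -2 g_1^0 \left(\frac{a}{r}\right)^3 \cos\theta, \qquad B_N = \frac{\mu_0}{r} \frac{\partial \psi_m}{\partial \theta} = -g_1^0 \left(\frac{a}{r}\right)^3 \sin\theta. \tag{2.9}$$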

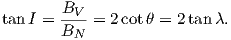

Given some latitude λ on the surface of the Earth in Figure 2.1a and using the equations for BV and BN, we find that:

$$\tan I = \frac{B_V}{B_N} = 2 \cot\theta = 2 \tan\lambda. \tag{2.10}$$

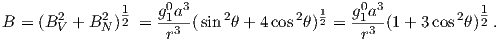

This equation is sometimes called the dipole formula and shows that the inclination of the magnetic field is directly related to the co-latitude (θ) for a field produced by a geocentric axial dipole (or g10). The dipole formula allows us to calculate the latitude of the measuring position from the inclination of the (GAD) magnetic field, a result that is fundamental in plate tectonic reconstructions. The intensity of a dipolar magnetic field is also related to (co)latitude because:

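$$B = \left(B_N^2 + B_V^2\right)^{1/2} = |g_1^0| \left(\frac{a}{r}\right)^3 \left(1 + 3\cos^2\theta\right)^{1/2}. \tag{2.11}$$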

The dipole field intensity has changed by more than an order of magnitude in the past and the dipole relationship of intensity to latitude turns out to be not useful for tectonic reconstructions.
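The dipole formula and the latitude dependence of dipole intensity are easy to evaluate numerically. The sketch below assumes a purely axial dipole observed at the Earth's surface (r = a) and takes the axial coefficient from Table 2.1 as a default; the function names are assumptions of this example rather than PmagPy calls.

```python
import math

def gad_inclination(lat):
    """Inclination (degrees) of a GAD field at latitude lat (degrees): tan I = 2 tan(lat)."""
    return math.degrees(math.atan(2.0 * math.tan(math.radians(lat))))

def gad_latitude(inc):
    """Latitude (degrees) implied by inclination inc (degrees) for a GAD field."""
    return math.degrees(math.atan(0.5 * math.tan(math.radians(inc))))

def gad_intensity(lat, g10=-29442.0):
    """Surface intensity (same units as g10, here nT) of an axial dipole at latitude lat."""
    colat = math.radians(90.0 - lat)       # the formula uses co-latitude
    return abs(g10) * math.sqrt(1.0 + 3.0 * math.cos(colat) ** 2)

print(gad_inclination(45.0))                     # inclination at 45 degrees N
print(gad_latitude(gad_inclination(30.0)))       # round trip back to the latitude
print(gad_intensity(0.0), gad_intensity(90.0))   # equator versus pole
```

The intensity doubles from equator to pole, in line with the roughly 30 μT and 60 μT values quoted earlier for the present field.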

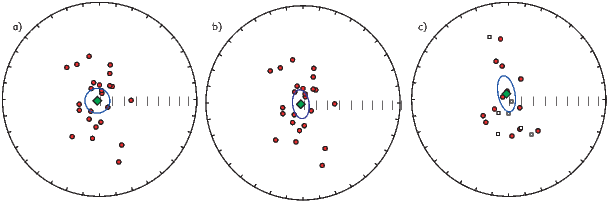

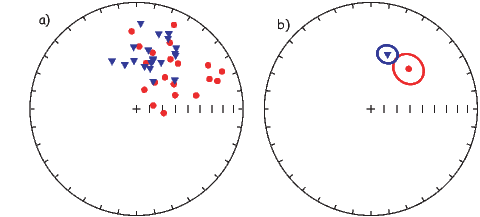

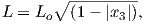

Magnetic field and magnetization directions can be visualized as unit vectors anchored at the center of a unit sphere. Such a unit sphere is difficult to represent on a 2-D page. There are several popular projections, including the Lambert equal area projection, which we will use extensively in later chapters. The principles of construction of the equal area projection are covered in Appendix B.1.

In general, regions of equal area on the sphere project as equal area regions on this projection, as the name implies. Plotting directional data in this way enables rapid assessment of data scatter. A drawback of this projection is that circles on the surface of a sphere project as ellipses. Also, because we have projected a vector onto a unit sphere, we have lost information concerning the magnitude of the vector. Finally, lower and upper hemisphere projections must be distinguished with different symbols. The paleomagnetic convention is: lower hemisphere projections (downward directions) use solid symbols, while upper hemisphere projections are open.
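For readers who want to see the projection as arithmetic, here is a minimal sketch of a Lambert equal area mapping scaled so that horizontal directions fall on a circle of unit radius. It is an illustration under that scaling assumption, and the function name `equal_area_xy` is hypothetical; the construction itself is described in the Appendix.

```python
import math

def equal_area_xy(dec, inc):
    """Map a direction (degrees) to plot coordinates on a unit-radius
    equal area net. Returns (x, y, lower) with x to the east, y to the
    north and lower True for downward (lower hemisphere) directions."""
    d = math.radians(dec)
    i = math.radians(inc)
    # Radial distance: 0 at the center (vertical), 1 at the rim (horizontal)
    r = math.sqrt(1.0 - abs(math.sin(i)))
    x = r * math.sin(d)
    y = r * math.cos(d)
    lower = inc >= 0            # plot with a solid symbol if True, open if False
    return x, y, lower

print(equal_area_xy(90.0, 0.0))    # horizontal, due east: on the rim
print(equal_area_xy(0.0, 90.0))    # straight down: at the center
print(equal_area_xy(0.0, -30.0))   # upward direction: open symbol
```

The magnitude of the vector plays no role here, which is the loss of information noted above.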

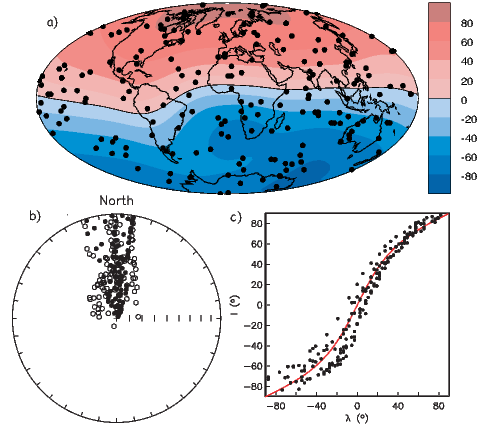

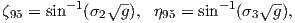

The dipole formula allows us to convert a given measurement of I to an equivalent magnetic co-latitude θm:

$$\theta_m = \cot^{-1}\left(\tfrac{1}{2} \tan I\right). \tag{2.12}$$

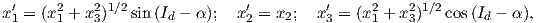

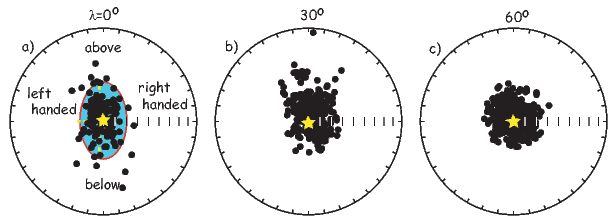

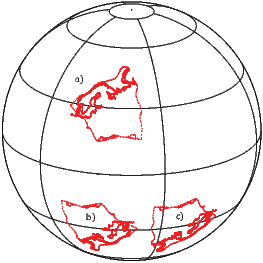

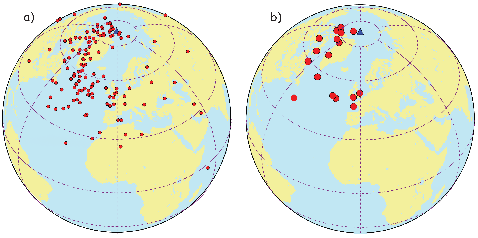

If the field were a simple GAD field, θm would be a reasonable estimate of θ, but non-GAD terms can invalidate this assumption. To get a feel for the effect of these non-GAD terms, we consider first what would happen if we took random measurements of the Earth’s present field (see Figure 2.7). We evaluated the directions of the magnetic field using the IGRF for 2005 at 200 positions on the globe (shown in Figure 2.7a). These directions are plotted in Figure 2.7b using the paleomagnetic convention of open symbols pointing up and closed symbols pointing down. In Figure 2.7c, we plot the inclinations as a function of latitude. As expected from a predominantly dipolar field, inclinations cluster around the values for a geocentric axial dipolar field but there is considerable scatter and interestingly the scatter is larger in the southern hemisphere than in the northern one. This is related to the low intensities beneath South America and the Atlantic region seen in Figure 2.5a.

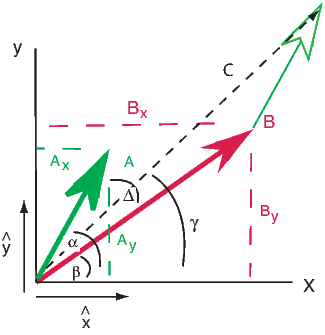

Often we wish to compare directions from distant parts of the globe. There is an inherent difficulty in doing so because of the large variability in inclination with latitude. In such cases it is appropriate to consider the data relative to the expected direction (from GAD) at each sampling site. For this purpose, it is useful to use a transformation whereby each direction is rotated such that the direction expected from a geocentric axial dipole field (GAD) at the sampling site is the center of the equal area projection. This is accomplished as follows:

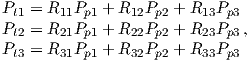

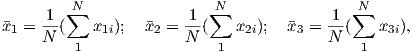

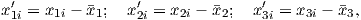

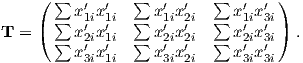

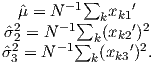

Each direction is converted to Cartesian coordinates (xi) by:

$$x_1 = \cos D \cos I, \qquad x_2 = \sin D \cos I, \qquad x_3 = \sin I. \tag{2.13}$$

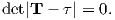

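These are rotated to the new coordinate system (x′i, see Appendix A.3.5) by:

$$x'_1 = \left(x_1^2 + x_3^2\right)^{1/2} \sin(I_d - \alpha), \qquad x'_2 = x_2, \qquad x'_3 = \left(x_1^2 + x_3^2\right)^{1/2} \cos(I_d - \alpha),$$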

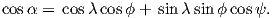

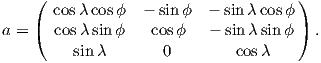

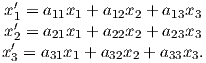

where $I_d$ is the inclination expected from a GAD field ($\tan I_d = 2 \tan\lambda$), λ is the site latitude, and α is the inclination of the paleofield vector projected onto the N-S plane ($\alpha = \tan^{-1}(x_3/x_1)$). The x′i are then converted to D′,I′ by Equation 2.4.
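The transformation can be sketched as a rotation about the east (X2) axis. This example is an assumption-laden illustration rather than the PmagPy implementation: it writes the rotation in expanded form, which is algebraically the same as using the projected length and the angle between the expected inclination and α, but avoids the quadrant ambiguity of the arctangent. The function name is hypothetical.

```python
import math

def to_gad_centered(dec, inc, site_lat):
    """Rotate a direction (degrees) so that the GAD direction expected at
    site_lat plots at the center of the projection. Returns (D', I')."""
    d, i = math.radians(dec), math.radians(inc)
    x1 = math.cos(d) * math.cos(i)
    x2 = math.sin(d) * math.cos(i)
    x3 = math.sin(i)
    i_d = math.atan(2.0 * math.tan(math.radians(site_lat)))   # expected inclination
    # Expanded form of sqrt(x1^2 + x3^2) * sin(I_d - alpha) and * cos(I_d - alpha)
    x1p = x1 * math.sin(i_d) - x3 * math.cos(i_d)
    x2p = x2
    x3p = x1 * math.cos(i_d) + x3 * math.sin(i_d)
    dec_p = math.degrees(math.atan2(x2p, x1p)) % 360.0
    inc_p = math.degrees(math.asin(max(-1.0, min(1.0, x3p))))
    return dec_p, inc_p

# A direction 10 degrees shallower than the GAD direction at 45 degrees N
expected_inc = math.degrees(math.atan(2.0 * math.tan(math.radians(45.0))))
print(to_gad_centered(0.0, expected_inc - 10.0, 45.0))
```

A direction that matches the GAD expectation exactly maps to I′ = 90∘, and departures from it show up as distance from the center of the plot.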

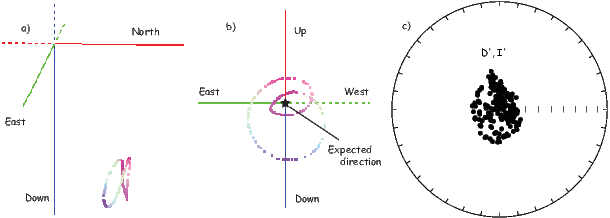

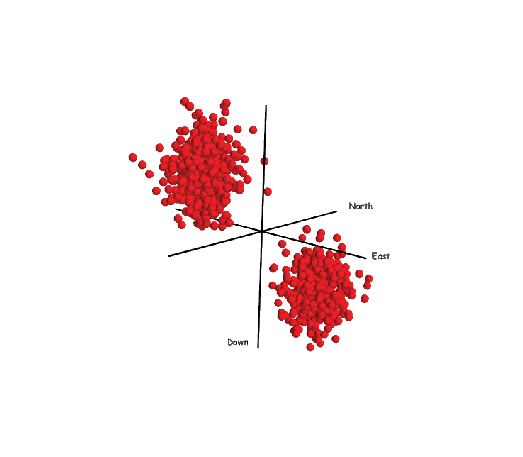

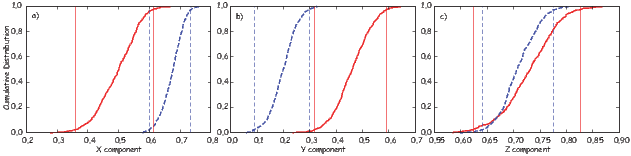

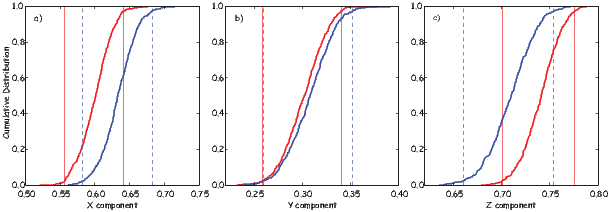

In Figure 2.8a we show the geomagnetic field vectors evaluated at random longitudes along a latitude band of 45∘N. The vectors are shown in their Cartesian coordinates of North, East and Down. In Figure 2.8b we show what happens when we rotate the coordinate system to peer down the direction expected from an axial dipolar field at 45∘N (which has an inclination of 63∘). The vectors circle about the expected direction. Finally, we see what happens to the directions shown in Figure 2.7b after the D′,I′ transformation in Figure 2.8c. These are unit vectors projected along the expected direction for each observation in Figure 2.7a. Comparing the equal area projection of the directions themselves (Figure 2.7b) to the transformed directions (Figure 2.8c), we see that the latitudinal dependence of the inclinations has been removed.

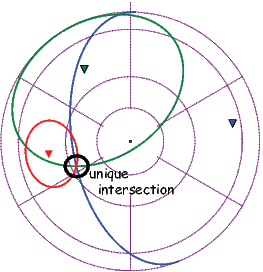

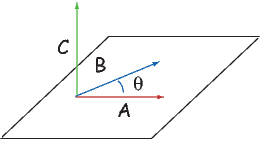

We are often interested in whether the geomagnetic pole has changed, or whether a particular piece of crust has rotated with respect to the geomagnetic pole. Yet, what we observe at a particular location is the local direction of the field vector. Thus, we need a way to transform an observed direction into the equivalent geomagnetic pole.

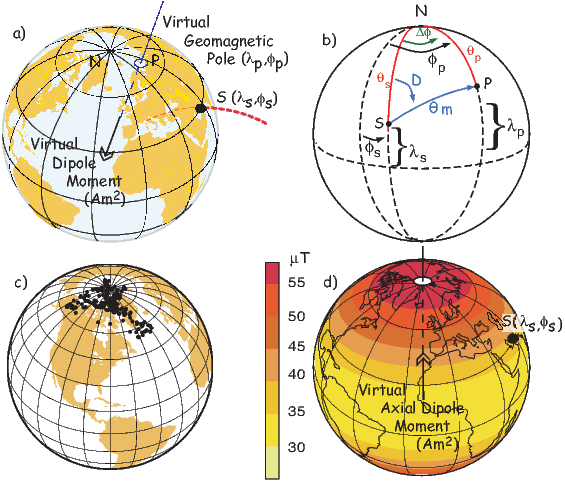

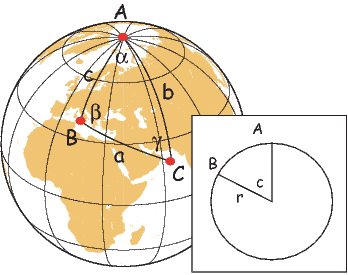

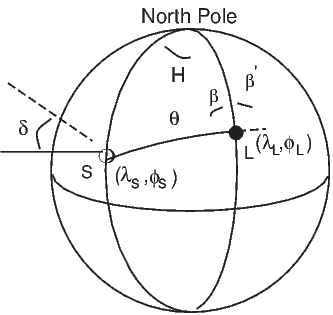

In order to remove the dependence of direction merely on position on the globe, we imagine a geocentric dipole which would give rise to the observed magnetic field direction at a given latitude (λ) and longitude (ϕ). The virtual geomagnetic pole (VGP) is the point on the globe that corresponds to the geomagnetic pole of this imaginary dipole (Figure 2.9a).

Paleomagnetists use the following conventions: ϕ is measured positive eastward from the Greenwich meridian and ranges from 0 → 360∘; θ is measured from the North pole and goes from 0 → 180∘. Of course θ relates to latitude, λ by θ = 90 − λ. θm is the magnetic co-latitude and is given by Equation 2.12. Be sure not to confuse latitudes and co-latitudes. Also, be careful with declination. Declinations between 180∘ and 360∘ are equivalent to D - 360 ∘ which are counter-clockwise with respect to North.

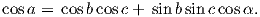

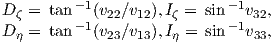

The first step in the problem of calculating a VGP is to determine the magnetic co-latitude θm by Equation 2.12, which follows from the dipole formula (Equation 2.10). The declination D is the angle from the geographic North Pole to the great circle joining the observation site S and the pole P, and Δϕ is the difference in longitudes between P and S, ϕp −ϕs. Now we use some tricks from spherical trigonometry as reviewed in Appendix A.3.1.

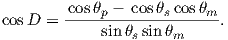

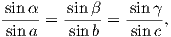

We can locate VGPs using the law of sines and the law of cosines. The declination D is the angle from the geographic North Pole to the great circle joining S and P (see Figure 2.9) so:

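$$\cos\theta_p = \cos\theta_s \cos\theta_m + \sin\theta_s \sin\theta_m \cos D, \tag{2.14}$$

which allows us to calculate the VGP co-latitude θp. The VGP latitude is given by:

$$\lambda_p = 90^\circ - \theta_p.$$

To determine ϕp, we first calculate the angular difference between the pole and site longitude Δϕ.

$$\sin\Delta\phi = \frac{\sin\theta_m \sin D}{\sin\theta_p}. \tag{2.15}$$

If cosθm ≥ cosθs cosθp, then ϕp = ϕs + Δϕ. However, if cosθm < cosθs cosθp then ϕp = ϕs + 180∘ − Δϕ.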
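The steps above can be strung together in a short function. This is a sketch for illustration, not the PmagPy routine; the function name `dir_to_vgp` and the example site are assumptions, and the case of a pole that coincides with a geographic pole (where the longitude is undefined) is handled by simply returning the site longitude.

```python
import math

def dir_to_vgp(dec, inc, site_lat, site_lon):
    """Return (pole latitude, pole longitude) in degrees for a direction
    (dec, inc) observed at (site_lat, site_lon); all angles in degrees."""
    d = math.radians(dec)
    # Magnetic co-latitude: cot(theta_m) = tan(I) / 2, kept between 0 and 180
    theta_m = math.atan2(2.0, math.tan(math.radians(inc)))
    theta_s = math.radians(90.0 - site_lat)          # site co-latitude
    # Law of cosines for the pole co-latitude
    cos_tp = (math.cos(theta_s) * math.cos(theta_m)
              + math.sin(theta_s) * math.sin(theta_m) * math.cos(d))
    theta_p = math.acos(max(-1.0, min(1.0, cos_tp)))
    pole_lat = 90.0 - math.degrees(theta_p)
    if math.sin(theta_p) < 1e-12:                    # pole at a geographic pole
        return pole_lat, site_lon % 360.0
    # Law of sines for the longitude difference
    sin_dphi = math.sin(theta_m) * math.sin(d) / math.sin(theta_p)
    dphi = math.degrees(math.asin(max(-1.0, min(1.0, sin_dphi))))
    if math.cos(theta_m) >= math.cos(theta_s) * math.cos(theta_p):
        pole_lon = site_lon + dphi
    else:
        pole_lon = site_lon + 180.0 - dphi
    return pole_lat, pole_lon % 360.0

# Hypothetical site at 30 N, 250 E with D = 25, I = 45
plat, plon = dir_to_vgp(25.0, 45.0, 30.0, 250.0)
print(round(plat, 1), round(plon, 1))   # a pole near 67.8 N, 342.7 E
```

Using atan2 for θm keeps the magnetic co-latitude between 0∘ and 180∘, so negative inclinations are handled without a separate branch.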

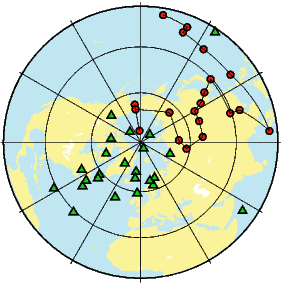

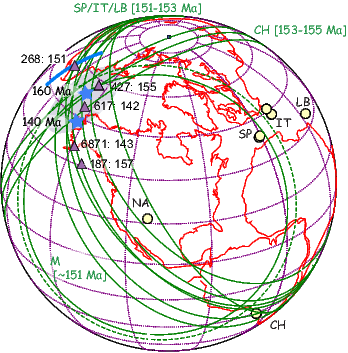

Now we can convert the directions in Figure 2.7b to VGPs (see Figure 2.9c). The grouping of points is much tighter in Figure 2.9c than in the equal area projection because the effect of latitude variations in dipole fields has been removed. If a number of VGPs are averaged together, the average pole position is called a “paleomagnetic pole”. How to average poles and directions is the subject of Chapters 11 and 12.

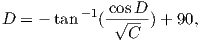

The procedure for calculating a direction from a VGP is similar to that for calculating the VGP from the direction. Magnetic colatitude θm is calculated in exactly the same way as before and yields inclination from the dipole formula. The declination can be calculated by solving for D in Equation 2.14 as:

$$\cos D = \frac{\cos\theta_p - \cos\theta_s \cos\theta_m}{\sin\theta_s \sin\theta_m}.$$

This equation works most of the time, but breaks down under some circumstances, for example, when the pole latitude is further to the south than the site latitude. The following algorithm works in the more general case:

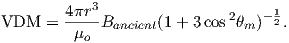

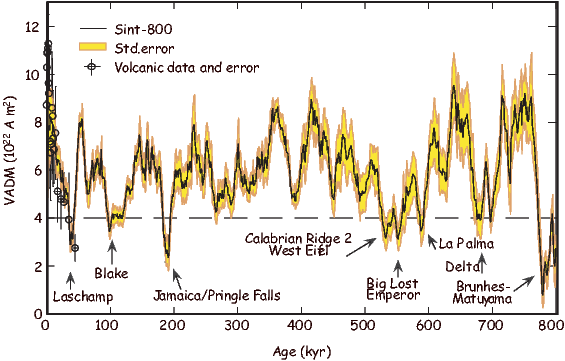

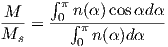
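One hedged way to sketch the calculation of a direction from a pole in Python is shown here. It is an assumption of this example, not necessarily the algorithm referred to above: θm is taken as the angular distance between site and pole from the law of cosines, inclination follows from the dipole formula, and the east or west sense of the declination is resolved from the sign of sin Δϕ. The function name and the test values are hypothetical.

```python
import math

def vgp_to_dir(site_lat, site_lon, pole_lat, pole_lon):
    """Return (declination, inclination) in degrees expected at a site
    for a geocentric dipole whose pole is at (pole_lat, pole_lon)."""
    theta_s = math.radians(90.0 - site_lat)
    theta_p = math.radians(90.0 - pole_lat)
    dphi = math.radians(pole_lon - site_lon)
    # Angular distance from site to pole (the magnetic co-latitude)
    cos_tm = (math.cos(theta_s) * math.cos(theta_p)
              + math.sin(theta_s) * math.sin(theta_p) * math.cos(dphi))
    theta_m = math.acos(max(-1.0, min(1.0, cos_tm)))
    # Dipole formula: tan I = 2 cot(theta_m)
    inc = math.degrees(math.atan2(2.0 * math.cos(theta_m), math.sin(theta_m)))
    denom = math.sin(theta_s) * math.sin(theta_m)
    if abs(denom) < 1e-12:
        return 0.0, inc       # declination undefined; 0 is an arbitrary choice
    cos_d = (math.cos(theta_p) - math.cos(theta_s) * math.cos(theta_m)) / denom
    dec = math.degrees(math.acos(max(-1.0, min(1.0, cos_d))))
    if math.sin(dphi) < 0:    # pole lies to the west of the site
        dec = 360.0 - dec
    return dec % 360.0, inc

# Hypothetical site at 30 N, 250 E and pole at 67.76 N, 342.73 E
print(vgp_to_dir(30.0, 250.0, 67.76, 342.73))
```

Taking the sign from sin Δϕ means that poles west of the site give declinations between 180∘ and 360∘.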

As pointed out earlier, magnetic intensity varies over the globe in a similar manner to inclination. It is often convenient to express paleointensity values in terms of the equivalent geocentric dipole moment that would have produced the observed intensity at a specific (paleo)latitude. Such an equivalent moment is called the virtual dipole moment (VDM) by analogy to the VGP (see Figure 2.9a). First, the magnetic (paleo)co-latitude θm is calculated as before from the observed inclination and the dipole formula of Equation 2.10. Then, following the derivation of Equation 2.11, we have

$$\mathrm{VDM} = \frac{4 \pi r^3}{\mu_0} B_{ancient} \left(1 + 3\cos^2\theta_m\right)^{-1/2}. \tag{2.16}$$

Sometimes the site co-latitude as opposed to magnetic co-latitude is used in the above equation, giving a virtual axial dipole moment (VADM; see Figure 2.9d).
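Both moments can be computed in a few lines. The sketch below assumes SI units (intensity in tesla, moment in Am$^2$) and an Earth radius of 6.371 × 10^6 m; the function names `vdm` and `vadm` are chosen for this example and are not PmagPy calls.

```python
import math

MU_0 = 4.0e-7 * math.pi     # permeability of free space (H/m)
EARTH_RADIUS = 6.371e6      # mean Earth radius (m)

def _dipole_moment(b_ancient, colat):
    """Moment (Am^2) of a geocentric dipole giving intensity b_ancient (T)
    at co-latitude colat (radians)."""
    scale = 4.0 * math.pi * EARTH_RADIUS**3 / MU_0
    return scale * b_ancient / math.sqrt(1.0 + 3.0 * math.cos(colat) ** 2)

def vdm(b_ancient, inc):
    """Virtual dipole moment from intensity (T) and inclination (degrees)."""
    theta_m = math.atan2(2.0, math.tan(math.radians(inc)))   # magnetic co-latitude
    return _dipole_moment(b_ancient, theta_m)

def vadm(b_ancient, site_lat):
    """Virtual axial dipole moment from intensity (T) and site latitude (degrees)."""
    return _dipole_moment(b_ancient, math.radians(90.0 - site_lat))

# An equatorial intensity equal to the axial term of Table 2.1 (29442 nT)
print(vdm(29442e-9, 0.0) / 1e21, "ZAm^2")
```

With an equatorial intensity of about 29 μT the result is in the high 70s of ZAm$^2$, close to the value of roughly 80 ZAm$^2$ quoted for the present field.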

SUPPLEMENTAL READINGS: Merrill et al. (1996), Chapters 1 & 2

For this and future problem sets, you will need the PmagPy package (see section in the Preface at the beginning of the book). After you have installed this and properly set your path, you can import the functions from PmagPy using these commands:

Please consult the Jupyter notebook PmagPy.ipynb for more help on using PmagPy functions within a notebook.

Problem 1

a) Write a Python script in a Jupyter notebook that converts declination, inclination and intensity to North, East, and Down. Read in the data in the file Chapter_2/ps2_prob1_data.txt. For this, the loadtxt function in the NumPy module will come in handy.

b) Choose 10 random spots on the surface of the Earth. You can use the pmag.get_unf function to generate a list for you. Then use the ipmag.igrf function to evaluate the declination, inclination and intensity at each of these locations in January 2006. As with all PmagPy programs and functions, you can find out what they do by printing out the doc string or getting the help message:

Calls like these generate help messages that will help you call the function properly.

c) Take the vectors from the output of Problem 1b and convert them to cartesian coordinates, using the script you wrote in Problem 1a.

Problem 2

a) Plot the IGRF directions from Problem 1b on an equal area projection by hand. Use the equal area net provided in the Appendix. Remember that the outer rim is horizontal and the center of the diagram is vertical. Azimuth goes around the rim with clockwise being positive. Put a thumbtack through the equal area (Schmidt) net and place a piece of tracing paper on the thumbtack. Mark the top of the stereonet with a tick mark on the tracing paper.

To plot a direction, rotate the tick mark of the tracing paper around counter clockwise until the top of the paper is rotated by the declination of the direction. Then count tick marks toward the center from the outer rim (the horizontal) to the inclination angle, plot the point, and rotate back so that the tick is North again. Put all your points on the diagram.

b) Now use the ipmag functions plot_net and plot_di, or write your own! Both plots should look the same.

Problem 3

You went to Wyoming (112∘ W and 36∘ N) to sample some Cretaceous rocks. You measured a direction with a declination of 345∘ and an inclination of 47∘.

a) What direction would you expect from the present (GAD) field?

b) What is the virtual geomagnetic pole position corresponding to the direction you actually measured? [Hint: Use the function pmag.dia_vgp in the PmagPy module or for a challenge, write your own! ]

Scientists in the late 19th century thought that it might be possible to exploit the magnetic record retained in accidental records to study the geomagnetic field in the past. Work in the mid 20th century provided the theoretical and experimental basis for presuming that such materials might retain a record of past geomagnetic fields. There are several books and articles that describe the subject in detail (see e.g., the supplemental readings). We present here a brief overview of theories on how rocks get and stay magnetized. We will begin with magnetism at the atomic level caused by electronic orbits and spins giving rise to induced magnetizations. Then we will see how electronic spins working in concert give rise to permanently magnetized substances (like magnetic minerals) making remanent magnetization possible.

We learned in Chapter 1 that magnetic fields are generated by electric currents. Given that there are no wires leading into or out of permanent magnets, you may well ask, “Where are the currents?” At the atomic level, the electric currents come from the motions of the electrons. From here quantum mechanics quickly gets esoteric, but some rudimentary understanding is helpful. In this chapter we will cover the bare minimum necessary to grasp the essentials of rock magnetism.

In Chapter 1 we took the classical (pre-quantum mechanics) approach and suggested that the orbit of an electron about the nucleus could be considered a tiny electric current with a correspondingly tiny magnetic moment. But quantum physics tells us that this “planetary” view of the atom cannot be true. An electron zipping around a nucleus would generate radio waves, lose energy, and eventually crash into the nucleus.

Apparently, this does not happen, so the classical approach is fatally flawed and we must turn to quantum mechanics.

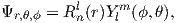

In quantum mechanics, electronic motion is stabilized by the fact that electrons can only have certain energy states; they are quantized. The energy of a given electron can be described in terms of solutions, Ψ, to something called Schrödinger’s wave equation. The function Ψ(r,θ,ϕ) gives the probability of finding an electron at a given position. [Remember from Chapter 2 that r,θ,ϕ are the three spherical coordinates.] It depends on three special quantum numbers (n,l,m):

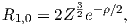

$$\Psi(r,\theta,\phi) = R_{nl}(r)\,Y_{lm}(\phi,\theta)$$ | (3.1) |

The number n is the so-called “principal” quantum number. The R_nl(r) are radial functions specific to the element in question and to the energy state n of the electron; they are evaluated at an effective radius r in atomic units. The Y_lm are a fully normalized complex representation of the spherical harmonics introduced in Section 2.2. For each level n, the number l ranges from 0 to n−1 and m runs from l down to −l.

The lowest energy of the quantum wave equations is found by setting n equal to unity and both l and m to zero. Under these conditions, the solution to the wave equation is given by:

$$R_{10}(r) = 2Z^{3/2}e^{-\rho/2}, \qquad Y_{00} = \left(\frac{1}{4\pi}\right)^{1/2}$$ | (3.2) |

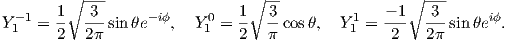

where Z is the atomic number and ρ is 2Zr∕n. Note that at this energy level, there is no dependence of Y on ϕ or θ. Substituting these two equations into Equation 3.1 gives the probability density Ψ for an electron as a function of radius r. This is sketched as the line in Figure 3.1. Another representation of the same idea is shown in the inset, whereby the density of dots at a given radius reflects the probability distribution shown by the solid curve. The highest dot density is found at a radius of about one atomic unit and tapers off with distance from the center of the atom. Because there is no dependence on θ or ϕ, the probability distribution is a spherical shell. All the l,m = 0 shells are spherical and are often referred to as the 1s, 2s, 3s shells, where the numbers are the energy levels n. A surface with equal probability is a sphere, and an example of one such shell is shown in Figure 3.2a.
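The claim that the 1s electron is most likely to be found about one atomic unit from the nucleus can be checked numerically. The sketch below assumes the standard hydrogen-like 1s radial function in atomic units, R = 2Z^(3∕2) exp(−ρ∕2) with ρ = 2Zr, and takes the probability per unit radius to be r²R² (the angular part integrates to one over the sphere). The variable names are illustrative.

```python
import numpy as np


def radial_1s(r, Z=1):
    """Hydrogen-like 1s radial function in atomic units (standard form, assumed here)."""
    rho = 2.0 * Z * r            # rho = 2Zr/n with n = 1
    return 2.0 * Z**1.5 * np.exp(-rho / 2.0)


r = np.linspace(0.0, 10.0, 100001)        # radius in atomic units
radial_density = r**2 * radial_1s(r)**2   # probability per unit radius

r_peak = r[np.argmax(radial_density)]                # most probable radius
total = float(np.sum(radial_density) * (r[1] - r[0]))  # should be close to 1

print(f"most probable radius: {r_peak:.3f} atomic units")
print(f"integrated probability: {total:.4f}")
```

For Z = 1 the maximum of r²R² sits at one atomic unit, and the density integrates to approximately one, as expected for a normalized wave function.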

For l = 1, m will have values of −1, 0 and 1 and the Y_lm(ϕ,θ) are given by:

As might be expected, the shells for l = 2 are even more complicated than for l = 1. These shells are called “d” shells and two examples are shown in Figure 3.2c and d.

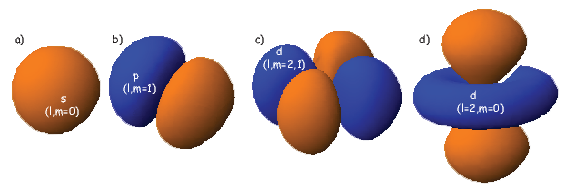

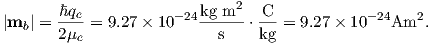

Returning to the tiny circuit idea, somehow the motion of the electrons in their shells acts like an electronic circuit and creates a magnetic moment. In quantum mechanics, the angular momentum vector of the electron L is quantized, for example as integer multiples of ℏ, the “reduced” Planck’s constant (or h∕2π where h = 6.63 × 10⁻³⁴ J s). The magnetic moment arising from the orbital angular momentum is given by:

| (3.3) |

This is known as the Bohr magneton.
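The size of the Bohr magneton can be worked out from fundamental constants. The sketch below assumes the standard expression mb = qe ℏ ∕ (2 me), where qe and me are the charge and mass of the electron; the rounded constant values are supplied here for illustration.

```python
import math

H_PLANCK = 6.626e-34      # Planck's constant (J s)
Q_ELECTRON = 1.602e-19    # electron charge (C)
M_ELECTRON = 9.109e-31    # electron mass (kg)

hbar = H_PLANCK / (2.0 * math.pi)                    # reduced Planck's constant
bohr_magneton = Q_ELECTRON * hbar / (2.0 * M_ELECTRON)

print(f"hbar = {hbar:.3e} J s")
print(f"Bohr magneton = {bohr_magneton:.3e} Am^2")
```

The result, roughly 9.27 × 10⁻²⁴ Am², is the unit in which atomic moments are counted in the rest of this chapter.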

So far we have not mentioned one last quantum number, s. This is the “spin” of the electron and has a value of ±1∕2. The spin itself produces a magnetic moment which is given by 2s mb, hence is numerically identical to that produced by the orbit.

Atoms have the same number of electrons as protons in order to preserve charge balance. Hydrogen has but one lonely electron which in its lowest energy state sits in the 1s electronic shell. Helium has a happy pair, so where does the second electron go? To fill in their electronic shells, atoms follow three rules:

Each unpaired spin has a moment of one Bohr magneton mb. The elements with the most unpaired spins are the transition elements, which are responsible for most of the paramagnetic behavior observed in rocks. For example, in Figure 3.3 we see that Mn has a structure of (1s²2s²2p⁶3s²3p⁶)3d⁵4s², hence has five unpaired spins and a net moment of 5 mb. Fe has a structure of (1s²2s²2p⁶3s²3p⁶)3d⁶4s² with a net moment of 4 mb. In minerals, the transition elements are in a variety of oxidation states. Fe commonly occurs as Fe²⁺ and Fe³⁺. When losing electrons to form ions, transition metals lose the 4s electrons first, so we have, for example, Fe³⁺ with a structure of (1s²2s²2p⁶3s²3p⁶)3d⁵, or 5 mb. Similarly Fe²⁺ has 4 mb and Ti⁴⁺ has no unpaired spins. Iron is the main magnetic species in geological materials, but Mn²⁺ (5 mb) and Cr³⁺ (3 mb) occur in trace amounts.
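The moments quoted above can be reproduced by counting unpaired spins in the 3d shell. This sketch assumes Hund's rule in its simplest (high-spin) form: the five 3d orbitals are each filled singly before any is doubly occupied. The function name and the table of 3d electron counts are illustrative.

```python
def unpaired_3d_spins(n_3d):
    """Unpaired spins for n_3d electrons spread over five 3d orbitals (Hund's rule, high-spin)."""
    if not 0 <= n_3d <= 10:
        raise ValueError("a 3d shell holds between 0 and 10 electrons")
    # The first five electrons occupy separate orbitals; the next five pair them off.
    return n_3d if n_3d <= 5 else 10 - n_3d


# Number of 3d electrons left after the 4s (and then 3d) electrons are removed
ions_3d = {"Ti4+": 0, "Cr3+": 3, "Mn2+": 5, "Fe3+": 5, "Fe2+": 6}

moments = {ion: unpaired_3d_spins(n) for ion, n in ions_3d.items()}
for ion, moment in moments.items():
    print(f"{ion}: {moment} Bohr magnetons")
```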

We have learned that there are two sources of magnetic moments in electronic motions: the orbits and the (unpaired) spins. These moments respond to external magnetic fields giving rise to an induced magnetization, a phenomenon alluded to briefly in Chapter 1. We will consider first the contribution of the electronic orbits.

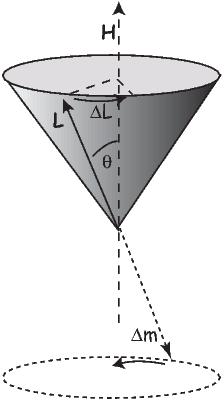

The angular momentum of electrons is quantized in magnitude but also has direction (see L in Figure 3.4). The angular momentum vector has an associated magnetic moment vector mb. A magnetic field H exerts a torque on the moment, which nudges it (and the momentum vector associated with it) to the side (ΔL). L therefore will precess around the magnetic field direction, much like a spinning top precesses around the direction of gravity. The precession of L is called Larmor precession.

The changed momentum vector from Larmor precession in turn results in a changed magnetic moment vector Δm. The sense of the change in net moment is always to oppose the applied field. Therefore, the response of the magnetic moments of electronic orbitals creates an induced magnetization MI that is observable outside the substance; it is related to the applied field by:

We learned in Chapter 1 that the proportionality between induced magnetization and the applied field is known as the magnetic susceptibility. The ratio MI∕H for the response of the electronic orbitals is termed the diamagnetic susceptibility χd; it is negative, essentially temperature independent and quite small. This diamagnetic response is a property of all matter, but for substances whose atoms possess atomic magnetic moments, diamagnetism is swamped by effects of magnetic fields on the atomic magnetic moments. In the absence of unpaired electronic spins, diamagnetic susceptibility dominates the magnetic response. Common diamagnetic substances include quartz (SiO₂), calcite (CaCO₃) and water (H₂O). To give you an idea of the magnitudes involved, the mass normalized susceptibility of quartz is −0.62 × 10⁻⁹ m³ kg⁻¹.

In many geological materials, the orbital contributions cancel out because they are randomly oriented with respect to one another and the magnetization arises from the electronic spins. We mentioned that unpaired electronic spins behave as magnetic dipoles with a moment of one Bohr magneton. In the absence of an applied field, or in the absence of the ordering influence of neighboring spins (known as exchange interactions), the electronic spins are essentially randomly oriented. An applied field acts to align the spins, which creates a net magnetization equal to χpH where χp is the paramagnetic susceptibility. For any geologically relevant conditions, the induced magnetization is linearly dependent on the applied field. In paramagnetic solids, atomic magnetic moments react independently to applied magnetic fields and to thermal energy. At any temperature above absolute zero, thermal energy vibrates the crystal lattice, causing atomic magnetic moments to oscillate rapidly in random orientations. In the absence of an applied magnetic field, atomic moments are equally distributed in all directions with a resultant magnetization of zero.

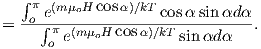

A useful first order model for paramagnetism was worked out by P. Langevin in 1905. (Of course in messy reality things are a bit more complicated, but Langevin theory will work well enough for us at this stage.) Langevin theory is based on a few simple premises:

| (3.4) |

Magnetic energy is at a minimum when the magnetic moment is lined up with the magnetic field.

Consider an atomic magnetic moment (m = 2mb = 1.85 × 10⁻²³ Am²) in a magnetic field of 10⁻² T (for reference, the largest geomagnetic field at the surface is about 65 μT; see Chapter 2). The aligning energy is therefore mB = 1.85 × 10⁻²⁵ J. However, thermal energy at 300 K (traditionally chosen as a temperature close to room temperature providing easy arithmetic) is Boltzmann’s constant times the temperature, or about 4 × 10⁻²¹ J. So thermal energy is some four orders of magnitude larger than the aligning energy and the net magnetization is small even in this rather large (compared to the Earth’s field) magnetizing field.
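The arithmetic in this comparison is easy to reproduce. The sketch below uses rounded values for the Bohr magneton and Boltzmann's constant; the variable names are illustrative.

```python
K_BOLTZMANN = 1.381e-23    # Boltzmann's constant (J/K)
BOHR_MAGNETON = 9.27e-24   # Am^2

moment = 2.0 * BOHR_MAGNETON   # atomic moment used in the text (Am^2)
field = 1e-2                   # applied field B (T)
temperature = 300.0            # K

aligning_energy = moment * field            # mB
thermal_energy = K_BOLTZMANN * temperature  # kT
ratio = thermal_energy / aligning_energy

print(f"aligning energy mB = {aligning_energy:.2e} J")
print(f"thermal energy kT  = {thermal_energy:.2e} J")
print(f"kT / mB            = {ratio:.1e}")
```

The ratio of order 10⁴ is why the net alignment of paramagnetic moments is so slight at room temperature.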

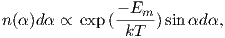

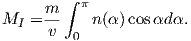

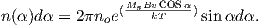

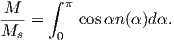

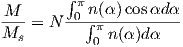

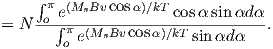

Using the principles of statistical mechanics, we find that the probability density of a particular magnetic moment having a magnetic energy of Em is given by:

| (3.5) |

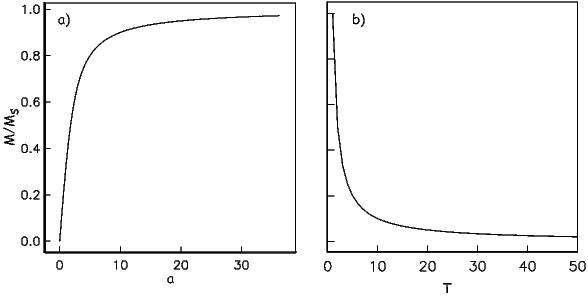

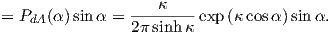

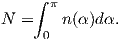

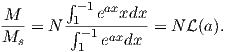

From this we see that the degree of alignment depends exponentially on the ratio of magnetic energy to thermal energy. The degree of alignment with the magnetic field controls the net magnetization M. When spins are completely aligned, the substance has a saturation magnetization Ms. The probability density function leads directly to the following relation (derived in Appendix A.2.1):

$$\frac{M}{M_s} = \left[\coth a - \frac{1}{a}\right] = \mathcal{L}(a)$$ | (3.6) |

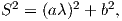

where a = mB∕kT. The function enclosed in square brackets is known as the Langevin function (ℒ).
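The behavior of the Langevin function is easy to explore numerically. The following sketch evaluates ℒ(a) = coth a − 1∕a with NumPy; the small-argument branch (ℒ ≈ a∕3) is an implementation choice added here to avoid dividing by zero at a = 0, and the function name is illustrative.

```python
import numpy as np


def langevin(a):
    """Langevin function L(a) = coth(a) - 1/a, with the limit a/3 used near a = 0."""
    a = np.asarray(a, dtype=float)
    small = np.abs(a) < 1e-4
    safe = np.where(small, 1.0, a)            # placeholder value avoids 1/0 warnings
    full = 1.0 / np.tanh(safe) - 1.0 / safe
    return np.where(small, a / 3.0, full)


for a in (0.0, 0.01, 1.0, 10.0, 20.0):
    print(f"a = {a:5.2f}  L(a) = {float(langevin(a)):.4f}")

# Example: one Bohr magneton in a 1 T field at 300 K
a_room = 9.27e-24 * 1.0 / (1.381e-23 * 300.0)
print(f"a = {a_room:.2e}, L(a) = {float(langevin(a_room)):.3e}, a/3 = {a_room / 3.0:.3e}")
```

For small a the function is indistinguishable from a∕3, and it approaches 1 only when a is much larger than unity.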

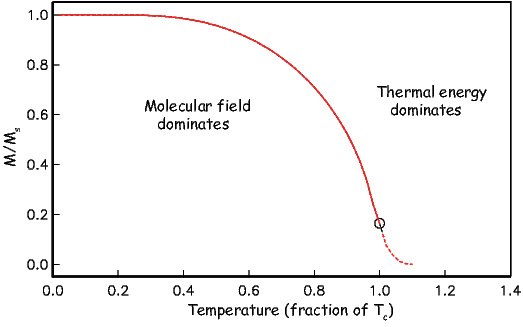

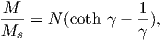

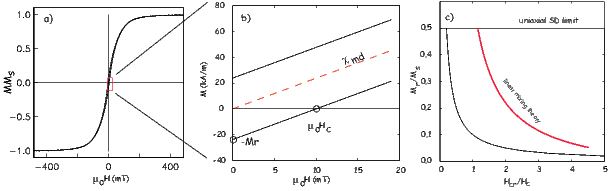

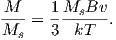

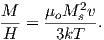

Equation 3.6 is plotted in Figure 3.5a and predicts several intuitive results: 1) M = 0 when B = 0 and 2) M∕Ms = 1 when the applied magnetic field is infinite. Furthermore, M is some 90% of Ms when mB is some 10-20 times kT. When kT >> mB, ℒ(a) is approximately linear with a slope of ∼ 1∕3. At room temperature and fields up to many tesla, ℒ(a) is approximately mB∕3kT. If the moments are unpaired spins (m = mb), then the maximum magnetization possible (Ms) is given by the number of moments N times their magnitude (mb), normalized by the volume of the material v, or Ms = Nmb∕v, and

Please note that we have neglected all deviations from isotropy including quantum mechanical effects as well as crystal shape, lattice defects, and state of stress. These complicate things a little, but to first order the treatment followed here provides a good approximation. We can rewrite the above equation as:

| (3.7) |

To first order, paramagnetic susceptibility χp is positive, larger than diamagnetism and inversely proportional to temperature. This inverse T dependence (see Figure 3.5b) is known as Curie’s law of paramagnetism. The paramagnetic susceptibility of, for example, biotite is 790 × 10⁻⁹ m³ kg⁻¹, or about three orders of magnitude larger than quartz (and of the opposite sign!).
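Curie's law can be illustrated with a few lines of code. The sketch below combines the low-field approximation ℒ(a) ≈ a∕3 with a = μ0 m H∕kT and Ms = N m∕v, which gives a volume susceptibility of μ0 n m²∕(3kT), where n = N∕v is the number of moments per unit volume. The value of n used in the example is purely hypothetical, chosen only to show the temperature dependence.

```python
import math

MU_0 = 4e-7 * math.pi      # permeability of free space (H/m)
K_BOLTZMANN = 1.381e-23    # Boltzmann's constant (J/K)
BOHR_MAGNETON = 9.27e-24   # Am^2


def paramagnetic_susceptibility(temp_k, number_density, moment=BOHR_MAGNETON):
    """Low-field volume susceptibility (dimensionless SI): mu0 * n * m^2 / (3 k T)."""
    return MU_0 * number_density * moment**2 / (3.0 * K_BOLTZMANN * temp_k)


n_hypothetical = 1e28      # moments per cubic meter (illustrative value only)
for temp in (100.0, 300.0, 600.0):
    chi = paramagnetic_susceptibility(temp, n_hypothetical)
    print(f"T = {temp:5.0f} K  chi_p = {chi:.2e}")
```

Halving the temperature doubles the susceptibility, which is the inverse T dependence described above.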

We have considered the simplest case here in which χ can be treated as a scalar and is referred to as the bulk magnetic susceptibility χb. In detail, magnetic susceptibility can be quite complicated. The relationship between induced magnetization and applied field can be affected by crystal shape, lattice structure, dislocation density, state of stress, etc., which give rise to possible anisotropy of the susceptibility. Furthermore, there are only a finite number of electronic moments within a given volume. When these are fully aligned, the magnetization reaches saturation. Thus, magnetic susceptibility is both anisotropic and non-linear with applied field.

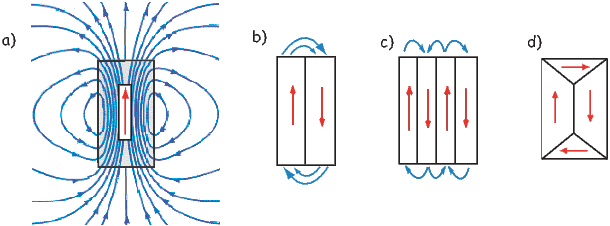

Some substances give rise to a magnetic field in the absence of an applied field. This magnetization is called remanent or spontaneous magnetization, also loosely known as ferromagnetism (sensu lato). Magnetic remanence is caused by strong interactions between neighboring spins that occur in certain crystals.

The so-called exchange energy is minimized when the spins are aligned parallel or anti-parallel depending on the details of the crystal structure. Exchange energy is a consequence of the Pauli exclusion principle (no two electrons can have the same set of quantum numbers). In the transition elements, the 3d orbital is particularly susceptible to exchange interactions because of its shape and the prevalence of unpaired spins, so remanence is characteristic of certain crystals containing transition elements with unfilled 3d orbitals.

In oxides, oxygen can form a bridge between neighboring cations which are otherwise too far apart for direct overlap of the 3d orbitals in a phenomenon known as superexchange. In Figure 3.6 the 2p electrons of the oxygen are shared with the neighboring 3d shells of the iron ions. Pauli’s exclusion principle means that the shared electrons must be antiparallel to each of the electrons in the 3d shells. The result is that the two cations are coupled. In the case shown in Figure 3.6 there is an Fe²⁺ ion coupled antiparallel to an Fe³⁺ ion. For two ions with the same charge, the coupling will be parallel. Exchange energies are huge, equivalent to the energy associated with the same moment in a field of the order of 1000 T. [The largest field available in the Scripps paleomagnetic laboratory is about 2.5 T, and that only fleetingly.]

As temperature increases, crystals expand and exchange becomes weaker. Above a temperature characteristic of each crystal type (known as the Curie temperature Tc), cooperative spin behavior disappears entirely and the material becomes paramagnetic.

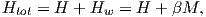

While the phenomenon of ferromagnetism results from complicated interactions of neighboring spins, it is useful to think of the ferromagnetic moment as resulting from a quasi-paramagnetic response to a huge internal field. This imaginary field is termed the Weiss molecular field Hw. In Weiss theory, Hw is proportional to the magnetization of the substance, i.e.,

where β is the constant of proportionality. The total magnetic field that the substance experiences is:

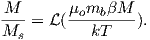

where H is the external field. By analogy to paramagnetism, we can substitute a = μ0 mb Htot∕kT into the Langevin function:

| (3.8) |
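Because M appears on both sides of the Weiss relation, it has to be solved self-consistently. The sketch below does this in zero external field (H = 0) under stated assumptions: writing the reduced magnetization as M∕Ms and defining a Curie temperature Tc = μ0 mb β Ms∕(3k) from the low-field limit of the Langevin function, the relation becomes M∕Ms = ℒ(3 (M∕Ms) Tc∕T). The fixed-point iteration, the starting guess and the function names are illustrative choices, not the book's method.

```python
import math


def langevin(a):
    """Langevin function coth(a) - 1/a, with the limit a/3 used near a = 0."""
    if abs(a) < 1e-4:
        return a / 3.0
    return 1.0 / math.tanh(a) - 1.0 / a


def reduced_magnetization(t_over_tc, n_iter=2000):
    """Solve M/Ms = L(3 (M/Ms) / (T/Tc)) for H = 0 by fixed-point iteration (T/Tc > 0)."""
    m = 1.0                      # start from full alignment
    for _ in range(n_iter):
        m = langevin(3.0 * m / t_over_tc)
    return m


for t in (0.25, 0.5, 0.75, 0.9, 1.5):
    print(f"T/Tc = {t:4.2f}  M/Ms = {reduced_magnetization(t):.3f}")
```

In this classical model a non-zero spontaneous magnetization exists only below Tc; above it the iteration decays to zero, and the material behaves as a paramagnet.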

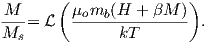

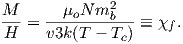

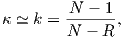

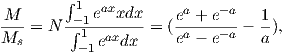

For temperatures above the Curie temperature Tc (i.e. T − Tc > 0) there is by definition no internal field, hence βM is zero. Substituting Nmb∕v for Ms, and using the low-field approximation for ℒ(a), Equation 3.8 can be rearranged to get:

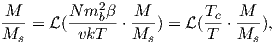

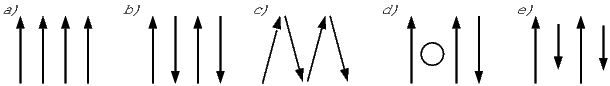

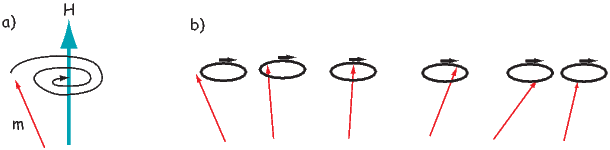

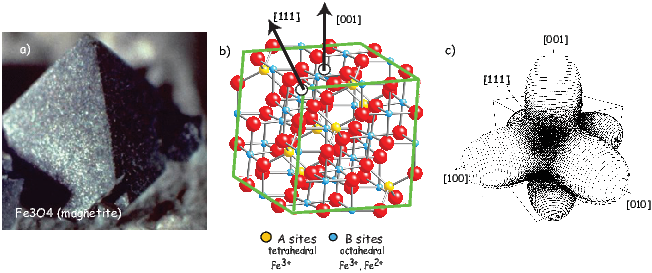

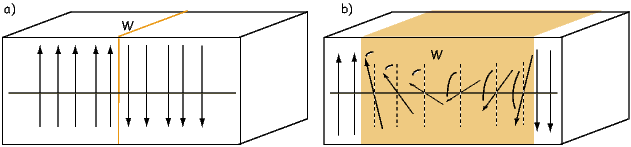

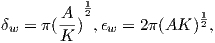

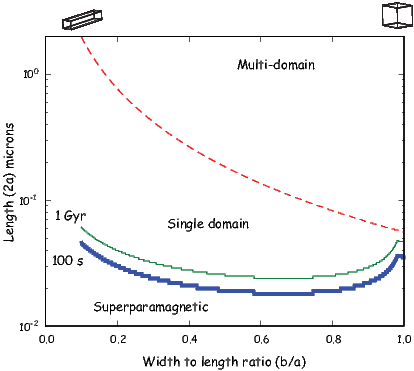

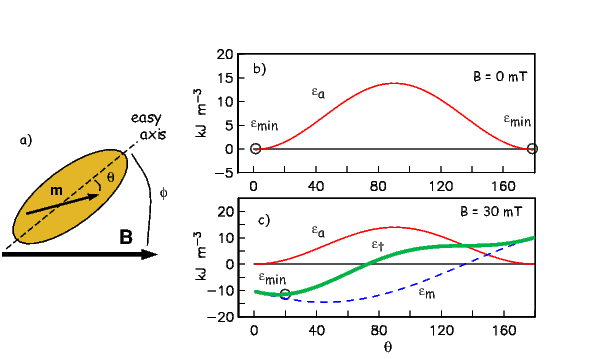

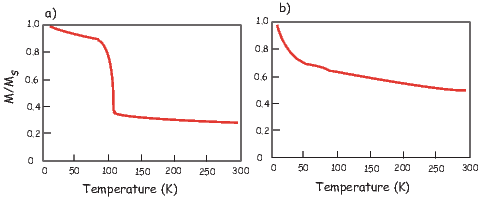

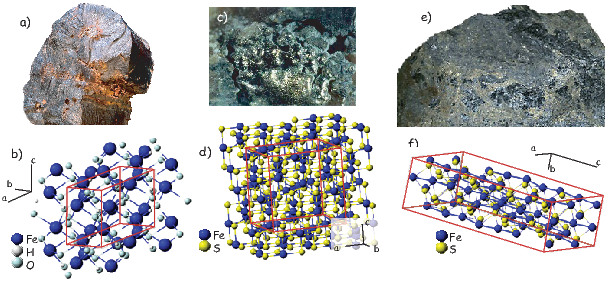

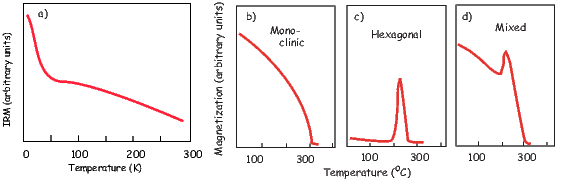

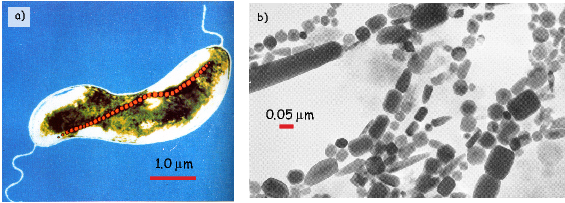

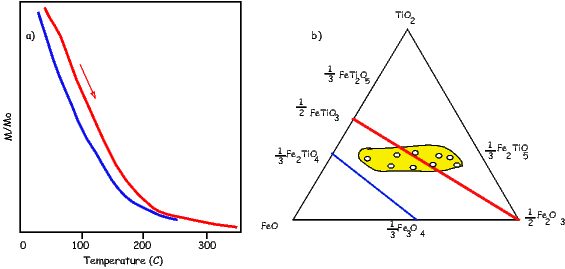

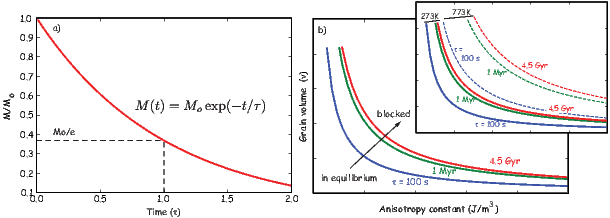

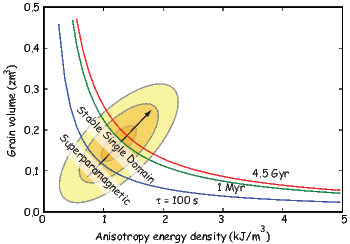

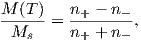

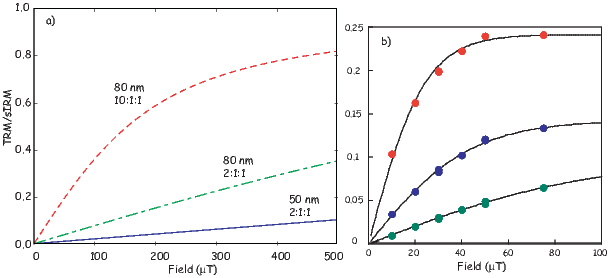

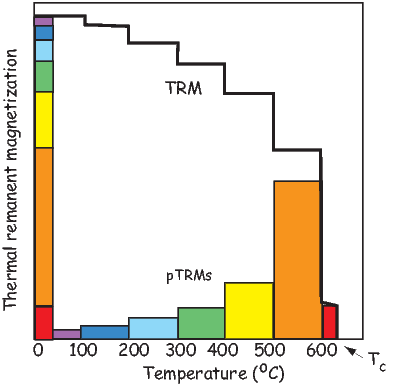

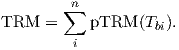

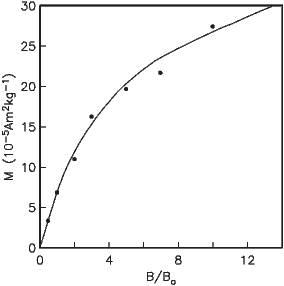

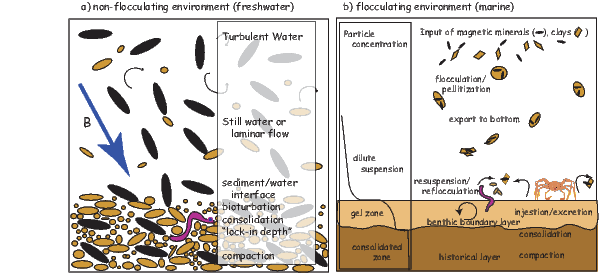

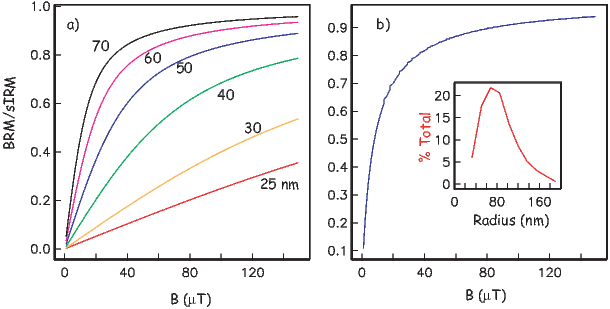

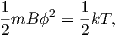

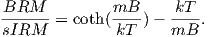

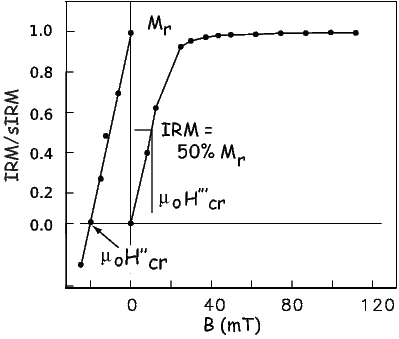

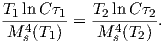

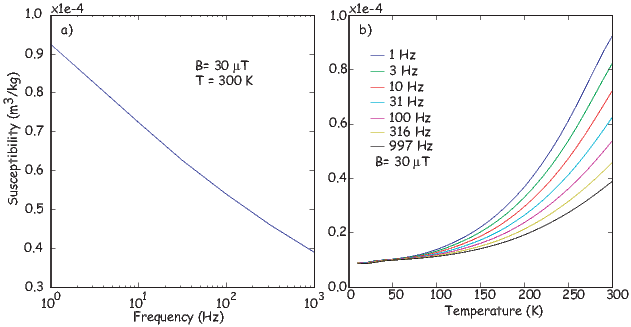

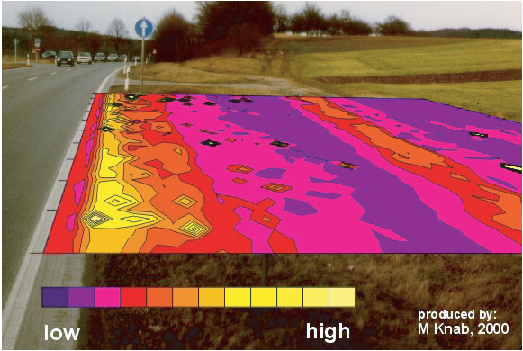

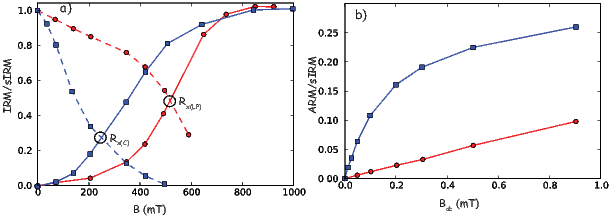

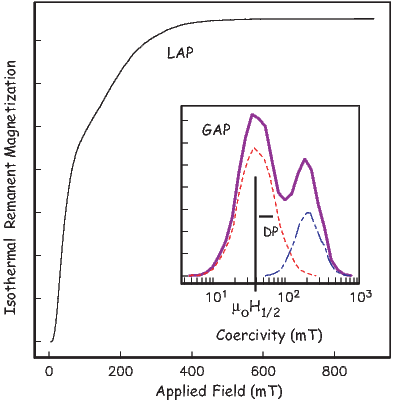

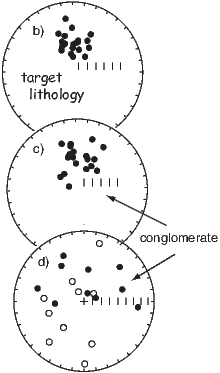

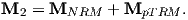

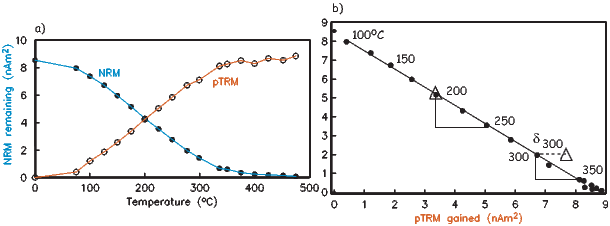

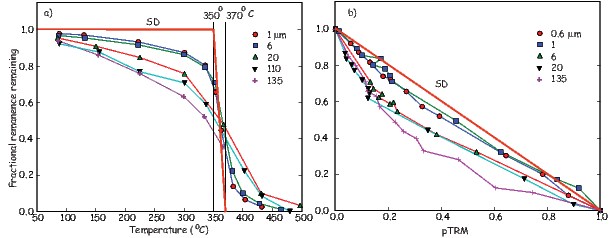

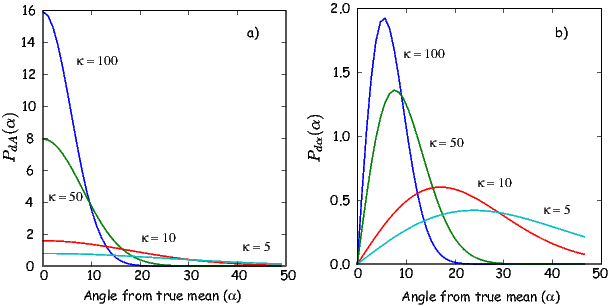

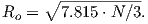

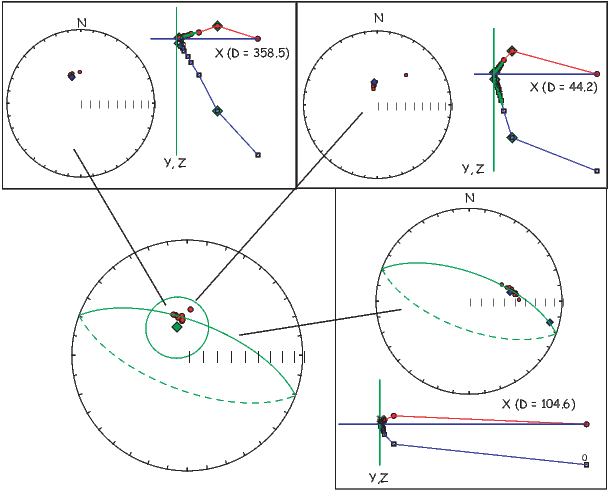

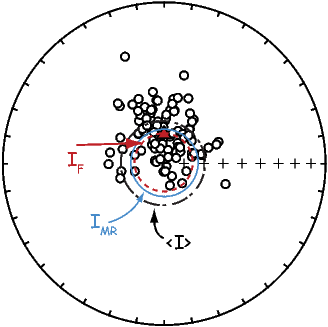

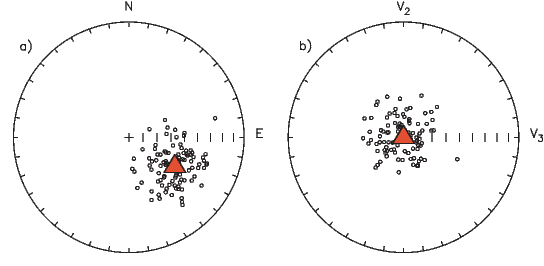

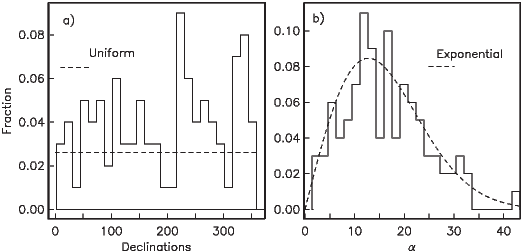

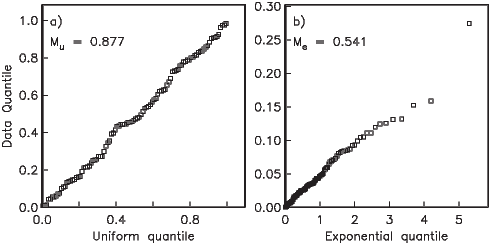

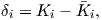

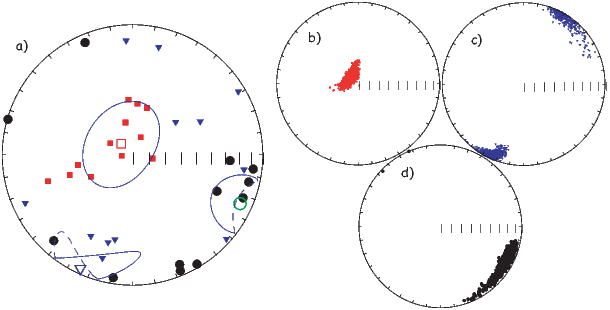

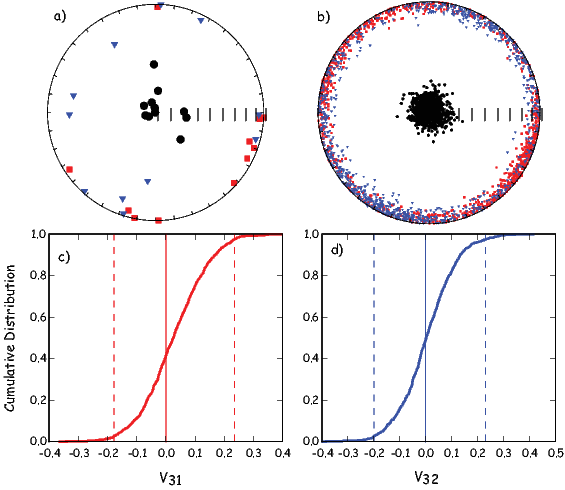

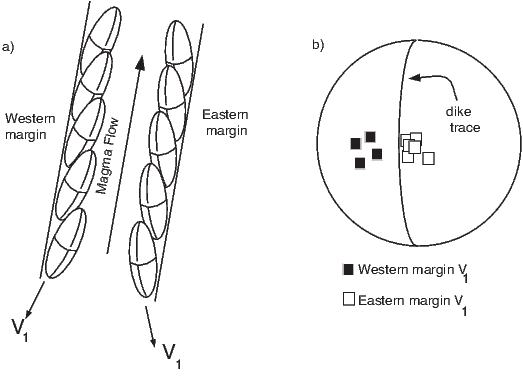

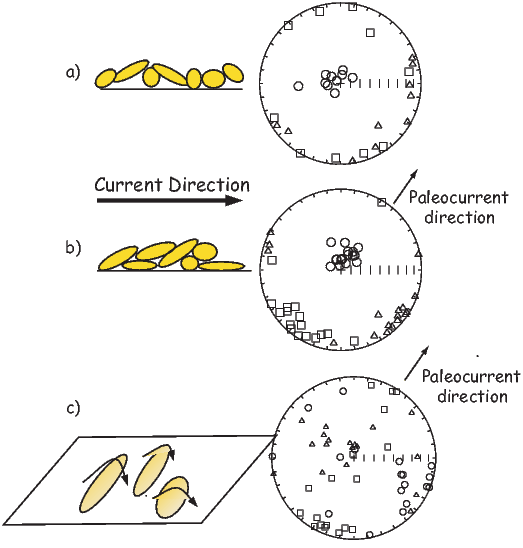

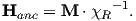

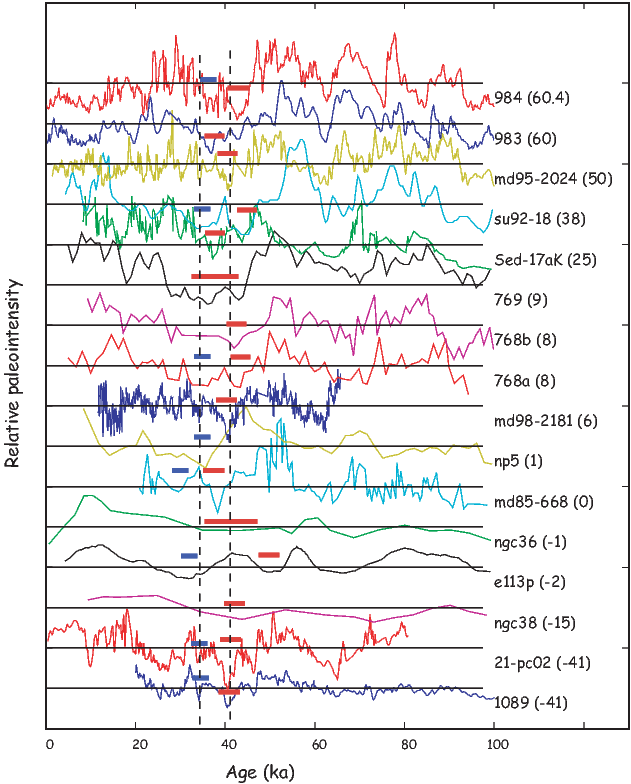

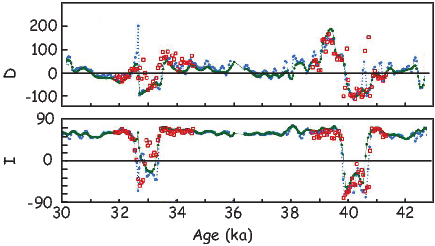

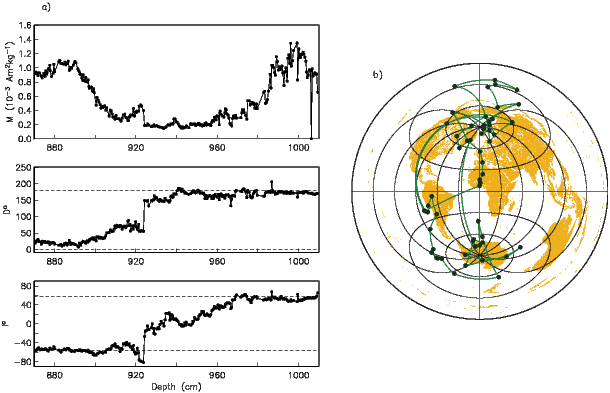

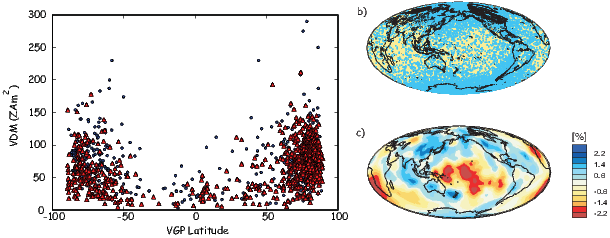

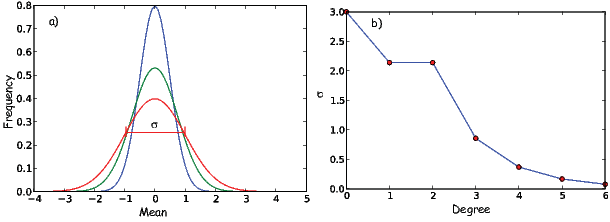

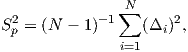

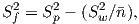

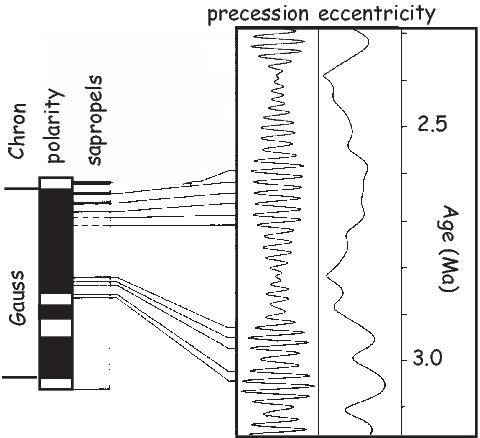

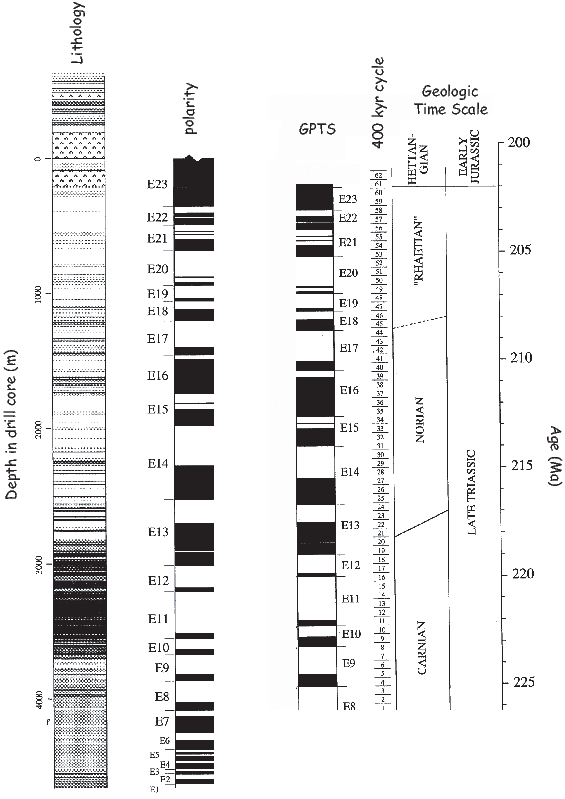

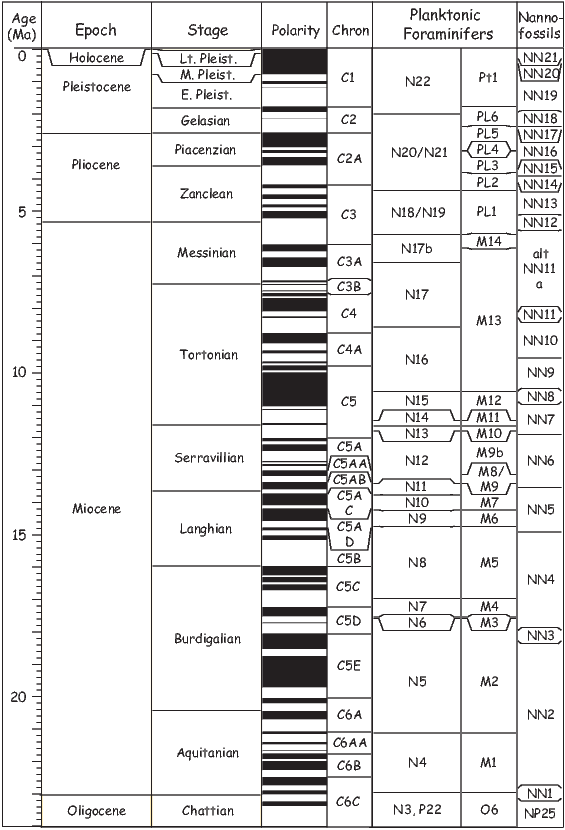

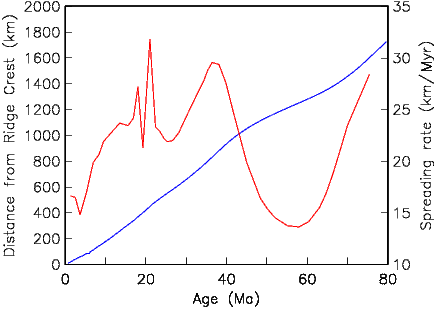

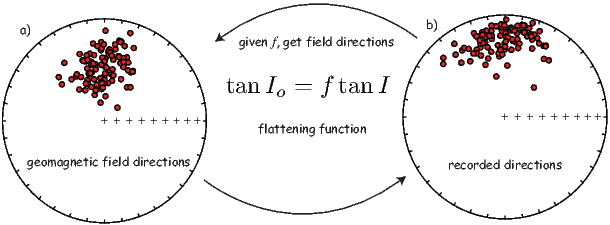

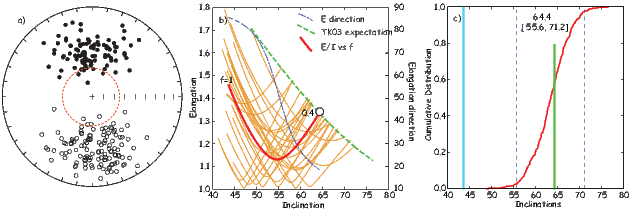

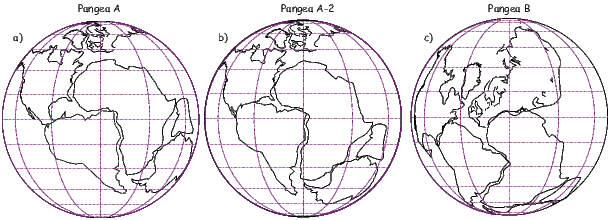

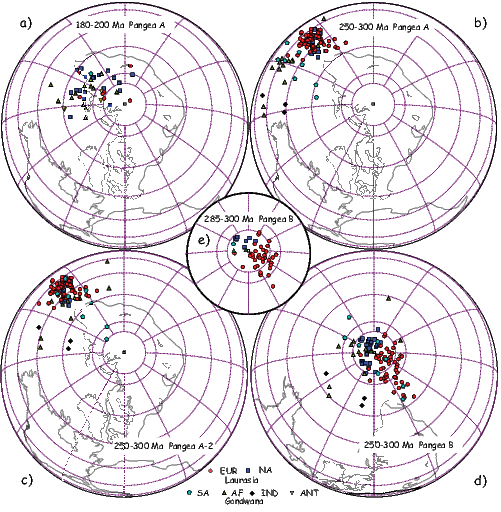

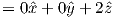

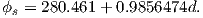

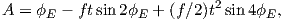

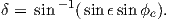

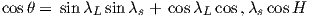

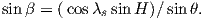

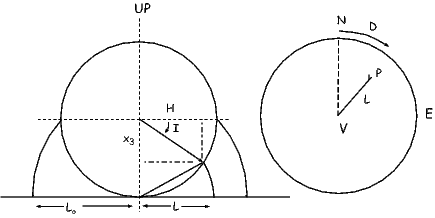

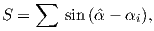

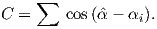

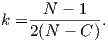

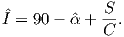

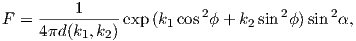

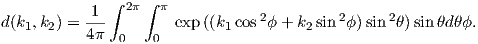

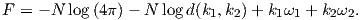

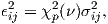

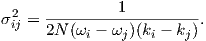

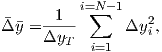

| (3.9) |